Single-model AI document processing struggles with complex documents, while structured parsing pipelines improve accuracy, consistency, and scalability. As a result, businesses can rely on automation that actually works beyond controlled demos.

Key Takeaways:

- Single-model AI struggles with complex, variable documents, leading to errors and workflow gaps.

- Synthetic parsing pipelines improve accuracy, speed, and consistency by handling each document element separately.

- Parseur has been using multi-model synthetic pipelines since 2016 to deliver reliable, scalable document automation.

Document automation is evolving. The idea that a single AI model can handle end-to-end AI document processing is proving unreliable, especially for businesses working with invoices, contracts, and high-volume operational documents.

For teams relying on OCR and AI for document processing, this change highlights a key reality: accurate, scalable automation depends on consistently converting documents into structured data. Without that foundation, even the most advanced models struggle to deliver reliable results in production workflows.

The Problem With Single-Model Document Processing

For years, document processing has followed a simple approach: use a single AI or OCR model to extract everything from a document. In theory, this works. In practice, it breaks down quickly.

The core issue is simple: documents are not uniform. A single invoice might contain:

- Printed text (vendor name, invoice number)

- Tables (line items with quantities, prices, totals)

- Handwritten notes (delivery instructions)

- Logos and stamps (company branding, approval signatures)

- Barcodes (tracking numbers)

Each of these elements behaves differently. Some are structured and predictable, while others are highly variable. Treating them all the same creates gaps in data capture.

This is where single-model approaches start to struggle. They are forced to interpret everything the same way, even when different parts of the document require different handling. The result is not just lower accuracy. It is inconsistency. Fields get missed, formats change unexpectedly, and outputs vary from one document to the next.

A global survey by Yahoo Finance found that 62.8% of organizations encounter document quality issues frequently or occasionally, and identified data quality as a top barrier to scaling AI. What looks like a small extraction issue quickly becomes a workflow problem when that data feeds into accounting systems, CRMs, or operational tools.

At low volume, teams can catch and fix these issues manually. But as document volume grows, especially during peak periods, the gaps become harder to manage. Exceptions pile up, rework increases, and automation requires constant oversight just to keep things running.

This is why many document automation projects stall: not because the technology lacks power, but because it is not reliable enough in real-world conditions. Forrester reports that over 60% of AI pilots fail to expand due to data quality and integration issues.

For teams that depend on documents to run daily operations, the goal is not just extraction. It is consistency, predictability, and confidence that workflows will keep running as formats change and operations expand.

What Is Synthetic Parsing?

Synthetic parsing is an approach to document processing that breaks a document into smaller components and processes each part separately, instead of treating the document as a single block of content.

Traditional systems try to extract everything in one pass. Synthetic parsing takes a different path: it identifies distinct elements within a document (such as text fields, tables, or visual components) and handles each one using the most appropriate method.

In practice, this means isolating key data points like invoice numbers, dates, or totals, separating structured sections like line-item tables, and treating variable or complex elements independently.
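To make "isolating key data points" concrete, here is a minimal Python sketch that pulls an invoice number, date, and total out of a text component and normalizes them into a fixed schema (an ISO date and a decimal amount). The field names, regex patterns, and MM/DD/YYYY date format are assumptions for a single hypothetical layout; this is an illustration of the idea, not Parseur's implementation.

```python
import re
from dataclasses import dataclass
from datetime import date, datetime
from decimal import Decimal
from typing import Optional

@dataclass
class InvoiceHeader:
    invoice_number: Optional[str]
    invoice_date: Optional[date]
    total: Optional[Decimal]

# Hypothetical patterns for one invoice layout; other layouts need their own rules.
PATTERNS = {
    "invoice_number": re.compile(r"\bInvoice\s*(?:No\.?|#|Number)\s*:?\s*([A-Z0-9-]+)", re.I),
    "invoice_date": re.compile(r"\bInvoice Date\s*:?\s*(\d{2}/\d{2}/\d{4})", re.I),
    # \b keeps the "total" inside "Subtotal" from matching.
    "total": re.compile(r"\bTotal\s*:?\s*\$?\s*([\d,]+\.\d{2})", re.I),
}

def find(field: str, text: str) -> Optional[str]:
    match = PATTERNS[field].search(text)
    return match.group(1) if match else None

def extract_header(text: str) -> InvoiceHeader:
    """Isolate each key field, then normalize it so downstream systems get one format."""
    raw_date = find("invoice_date", text)
    raw_total = find("total", text)
    return InvoiceHeader(
        invoice_number=find("invoice_number", text),
        invoice_date=datetime.strptime(raw_date, "%m/%d/%Y").date() if raw_date else None,
        total=Decimal(raw_total.replace(",", "")) if raw_total else None,  # "1,290.00" -> 1290.00
    )

sample = """ACME Corp
Invoice No: INV-1042
Invoice Date: 03/15/2026
Subtotal: $1,200.00
Total: $1,290.00"""

print(extract_header(sample))
```

Missing fields come back as None rather than as a guess, which makes gaps easy to route to review instead of silently passing bad data downstream.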

The goal is not just better extraction. It is more reliable structure. By processing documents in parts, synthetic parsing produces cleaner, more predictable outputs that are easier to map into downstream systems. Instead of inconsistent results that require cleanup, teams get structured data that fits directly into their workflows.

This approach also makes document automation more resilient. As layouts change or new formats appear, adjustments can be made at the component level without reworking the entire system. In other words, synthetic parsing changes document automation from a "best guess" process to a more controlled and dependable data pipeline.

Enter Synthetic Parsing Pipelines

IBM's 2026 AI trends report points to a more practical approach to document automation. Instead of relying on a single model to process an entire document, it favors breaking documents into parts and handling each component in a more structured way:

- Text blocks routed to a text extraction model optimized for OCR

- Tables processed separately to preserve rows, columns, and totals

- Images, logos, stamps, and signatures handled by computer vision models

- Handwriting sent to specialized recognition models

Each element is processed based on its behavior, rather than forcing a single model to interpret everything uniformly.
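The routing idea can be sketched as a dispatch table that maps each element type to its own handler. This is a minimal sketch under stated assumptions: an upstream layout-detection step (not shown) has already split the document into typed elements, and the handlers are hypothetical stand-ins for specialized models, with the vision and handwriting models left as placeholders.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

@dataclass
class Element:
    kind: str     # element type, assumed to come from an upstream layout-detection step
    content: str  # raw content for that element

def extract_text(el: Element) -> dict:
    # Stand-in for a text/OCR model: here it only trims whitespace.
    return {"kind": el.kind, "handler": "text_model", "value": el.content.strip()}

def extract_table(el: Element) -> dict:
    # Stand-in for a table model: keep rows and columns as a list of cell lists.
    rows = [[cell.strip() for cell in line.split("|")]
            for line in el.content.strip().splitlines()]
    return {"kind": el.kind, "handler": "table_model", "value": rows}

def placeholder(handler_name: str) -> Callable[[Element], dict]:
    # Stand-in for vision or handwriting models that are not implemented here.
    def handle(el: Element) -> dict:
        return {"kind": el.kind, "handler": handler_name, "value": None}
    return handle

ROUTES: Dict[str, Callable[[Element], dict]] = {
    "text": extract_text,
    "table": extract_table,
    "image": placeholder("vision_model"),
    "handwriting": placeholder("handwriting_model"),
}

def route(elements: List[Element]) -> List[dict]:
    results = []
    for el in elements:
        handler = ROUTES.get(el.kind)
        if handler is None:
            # Unknown element types are flagged (handler=None) instead of guessed at.
            results.append({"kind": el.kind, "handler": None, "value": None})
        else:
            results.append(handler(el))
    return results

doc = [Element("text", "  Invoice INV-1042 "), Element("table", "Widget | 3\nGadget | 2")]
print(route(doc))
```

Because each element type has its own entry, supporting a new kind of content means adding one handler rather than retraining or reworking a single all-purpose model.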

This move is not just about model performance. It reflects a broader shift toward building more reliable document workflows. By separating how different data types are handled, teams get more consistent outputs, fewer missed fields, and less variation from one document to the next.

It also reduces unnecessary processing overhead. Instead of running every document through a single heavy model, each component is handled more efficiently, improving speed and scalability as volume grows. The result is not just better accuracy but also more predictable data and workflows that hold up in real-world conditions, where formats change, documents vary, and consistency matters more than one-off results.

Why This Matters For Businesses In 2026

For teams evaluating modern document automation, this change reflects a broader shift in what "good" looks like in production.

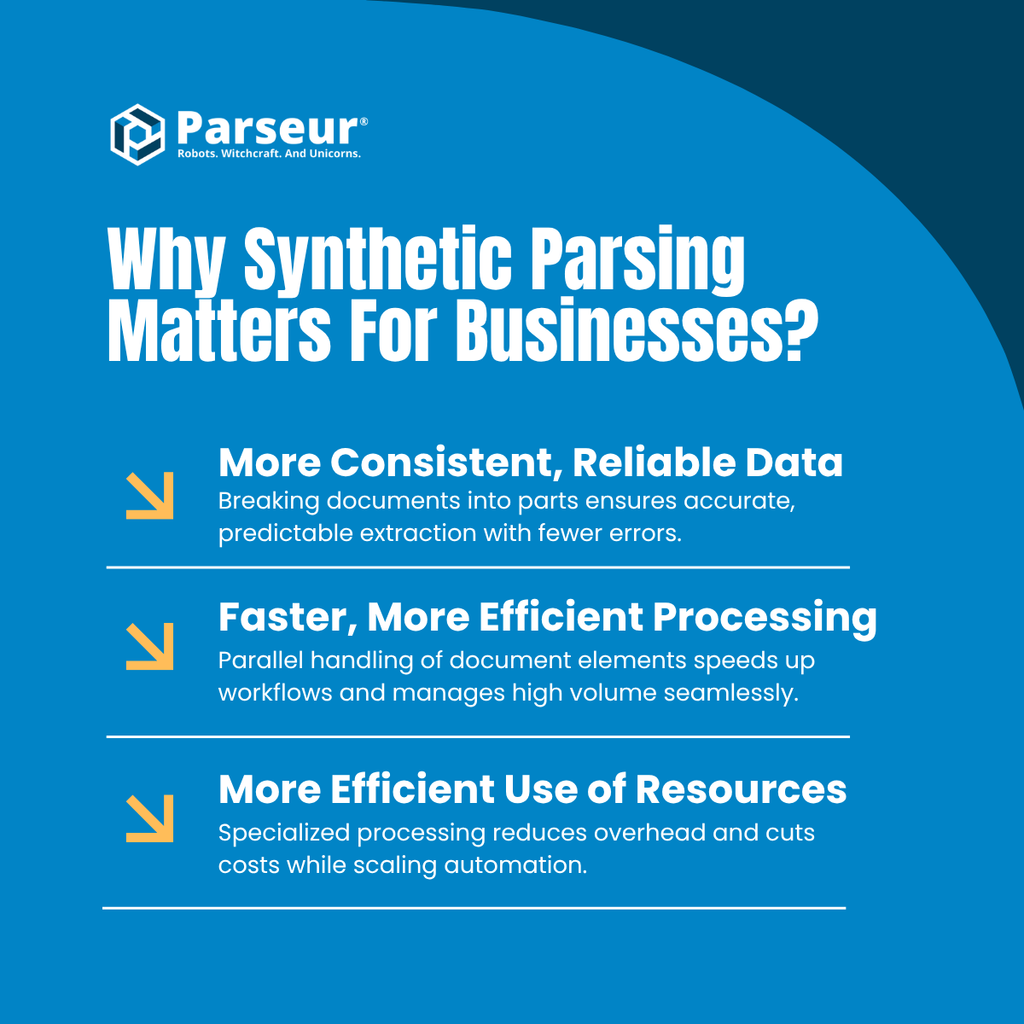

1. More Consistent, Reliable Data

Single-model approaches tend to produce variable results, especially with complex or changing document formats. Breaking documents into components leads to more consistent extraction across fields like totals, line items, and key identifiers. In practice, this means fewer missed fields, fewer exceptions, and less manual correction before data can be used downstream.

Single-model approaches hit a ceiling because no one model excels at every task. Synthetic parsing pipelines instead use specialized models, each optimized for a specific task.

2. Faster, More Efficient Processing

Handling different parts of a document separately also improves workflow performance. Instead of processing everything in a single pass, tasks can be handled more efficiently according to their complexity. For teams dealing with high volumes, this translates to faster turnaround times and the ability to handle spikes without workflows slowing down or breaking.

Example workflow:

- Old way (single model): Process entire 10-page invoice → 30 seconds

- New way (synthetic pipeline): Process text, tables, images in parallel → 6 seconds
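Why parallel processing helps can be illustrated with Python's standard `concurrent.futures` module. In this sketch, `time.sleep` stands in for I/O-bound calls to separate model services, and the per-component latencies are hypothetical values chosen for illustration; they do not reproduce the 30-second and 6-second figures above. Run sequentially, the wall-clock time is roughly the sum of the latencies; run in parallel, it approaches the latency of the slowest component.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import List, Tuple

# Simulated latencies in seconds (hypothetical, for illustration only).
COMPONENT_LATENCY = {"text": 0.20, "tables": 0.15, "images": 0.10}

def process_component(name: str) -> str:
    time.sleep(COMPONENT_LATENCY[name])  # stands in for an I/O-bound call to a model service
    return f"{name}: done"

def run_sequential(names: List[str]) -> Tuple[List[str], float]:
    start = time.perf_counter()
    results = [process_component(n) for n in names]
    return results, time.perf_counter() - start

def run_parallel(names: List[str]) -> Tuple[List[str], float]:
    start = time.perf_counter()
    # Threads suit I/O-bound work; CPU-bound local models would need processes instead.
    with ThreadPoolExecutor(max_workers=len(names)) as pool:
        results = list(pool.map(process_component, names))  # map preserves input order
    return results, time.perf_counter() - start

names = list(COMPONENT_LATENCY)
seq_results, seq_time = run_sequential(names)
par_results, par_time = run_parallel(names)
print(f"sequential: {seq_time:.2f}s, parallel: {par_time:.2f}s")
```

The speedup is bounded by the slowest component, so a document dominated by one heavy table will see less benefit than one with several similarly sized parts.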

3. More Efficient Use of Resources

Not every part of a document requires the same level of processing. A structured approach ensures that simpler elements are handled efficiently, while more complex sections get the attention they need. This reduces unnecessary processing overhead and helps teams scale automation without costs increasing unpredictably. Parallel pipelines reduce end-to-end processing cost by 60-70% for multi-element documents, according to Zen van Riel of GitHub.
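One common way to give simple elements cheap handling and reserve heavy processing for hard cases is a cascade (fallback) pattern: try an inexpensive deterministic rule first and escalate to an expensive model only when the rule misses. The sketch below is an assumption for illustration, not the method behind the cost figure cited above; the cost units are hypothetical, and `fake_model` is a stand-in for an AI parser.

```python
import re
from typing import Callable, Optional, Tuple

# Hypothetical relative cost units; real costs depend on the vendor and the model.
TEMPLATE_COST = 1
MODEL_COST = 20

TOTAL_RE = re.compile(r"\bTotal\s*:?\s*\$?\s*([\d,]+\.\d{2})", re.I)

def template_extract_total(text: str) -> Optional[str]:
    """Cheap, deterministic rule for the common, predictable case."""
    match = TOTAL_RE.search(text)
    return match.group(1).replace(",", "") if match else None

def extract_total(text: str, model: Callable[[str], Optional[str]]) -> Tuple[Optional[str], int]:
    """Try the cheap rule first; escalate to the expensive model only on a miss."""
    value = template_extract_total(text)
    if value is not None:
        return value, TEMPLATE_COST
    return model(text), TEMPLATE_COST + MODEL_COST

def fake_model(text: str) -> Optional[str]:
    # Hypothetical stand-in for an AI parser that reads free-form wording.
    return "99.00" if "ninety-nine" in text else None

print(extract_total("Total: $1,290.00", fake_model))                 # -> ('1290.00', 1)
print(extract_total("Amount due: ninety-nine dollars", fake_model))  # -> ('99.00', 21)
```

The savings depend entirely on how often the cheap rule succeeds: if most documents follow predictable layouts, most of them never reach the expensive step.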

The Bigger Change

This is not just a technical improvement. It is a move toward more dependable document workflows. For businesses, the goal is not to push accuracy metrics in isolation. It is to ensure that extracted data is consistent, usable, and reliable enough to power real operations, from accounting and finance to supply chain and customer workflows.

Read more about the accuracy, speed, and cost benefits of AI document processing: AI Invoice Processing Benchmarks 2026.

The Parseur Approach - Reliable Document Automation From Day One

At Parseur, this is not a new concept. We have been using a hybrid, multi-model approach from the start. Instead of forcing one model to handle every document, we route each element to the tool that handles it best.

Our synthetic pipeline:

- Template-based extraction for structured, predictable fields like invoice numbers, dates, and totals

- OCR models for scanned documents and images

- AI parsing for variable layouts and more complex documents

- Table detection to preserve rows, columns, and multi-line items

Why it works:

- Templates deliver near-perfect accuracy on fixed fields at minimal cost

- OCR handles scanned documents consistently

- AI models tackle variable content without breaking workflows

- Table detection ensures critical line-item data stays intact
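One way to check that line-item data "stays intact" is to recompute the subtotal from the extracted rows and compare it with the subtotal printed on the document; a dropped row or a badly merged multi-line item then shows up as a mismatch. This is a hypothetical validation step sketched for illustration, assuming rows have already been extracted as (description, quantity, unit price); it is not a description of Parseur's table detection.

```python
from decimal import Decimal
from typing import List, Tuple

LineItem = Tuple[str, int, Decimal]  # (description, quantity, unit_price), assumed shape

def check_line_items(items: List[LineItem], stated_subtotal: Decimal) -> Tuple[bool, Decimal]:
    """Recompute the subtotal from extracted rows and compare it with the printed one."""
    computed = sum((qty * price for _, qty, price in items), Decimal("0"))
    return computed == stated_subtotal, computed

items = [("Widget", 3, Decimal("10.00")), ("Gadget", 2, Decimal("25.50"))]
print(check_line_items(items, Decimal("81.00")))      # all rows present
print(check_line_items(items[:1], Decimal("81.00")))  # a dropped row is caught
```

`Decimal` is used instead of `float` so that currency arithmetic compares exactly.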

How to Evaluate Document Processing Tools In 2026

If IBM's prediction holds (and all signs point to it), here is what to look for when choosing a document automation solution:

Red flags: single-model approaches

- "Our AI model handles everything."

- "Just upload documents, and our model learns."

- No mention of OCR, AI parsing, or specialized handling for tables and handwriting

- Black-box pricing with no transparency on document complexity

Green flags: synthetic pipeline approaches

- Multiple extraction methods: AI, OCR, table detection, and more

- Clear logic for routing each element to the model that handles it best

- Transparent pricing based on document type or complexity

- Built for consistency and reliability in real workflows, not just demos
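During a proof of concept, one practical check is to run the same hand-labeled sample of your own documents through each candidate tool and compare per-field accuracy. The function below is a minimal sketch with hypothetical names; it assumes outputs are aligned one-to-one with the labeled documents and uses exact string matching, which real comparisons may relax by normalizing dates and amounts first. A field the tool fails to return counts as a miss.

```python
from typing import Dict, List

Record = Dict[str, str]

def field_accuracy(predictions: List[Record], ground_truth: List[Record]) -> Dict[str, float]:
    """Share of labeled documents where each field exactly matches the expected value."""
    if len(predictions) != len(ground_truth):
        raise ValueError("predictions and ground truth must be aligned one-to-one")
    fields = sorted({field for doc in ground_truth for field in doc})
    scores = {}
    for field in fields:
        labeled = sum(1 for truth in ground_truth if field in truth)
        hits = sum(1 for pred, truth in zip(predictions, ground_truth)
                   if field in truth and pred.get(field) == truth[field])
        scores[field] = hits / labeled
    return scores

truth = [{"invoice_number": "A1", "total": "10.00"}, {"invoice_number": "A2", "total": "20.00"}]
vendor_output = [{"invoice_number": "A1", "total": "10.00"}, {"invoice_number": "A2"}]
print(field_accuracy(vendor_output, truth))
```

Per-field scores make inconsistency visible: a tool can look accurate on average while repeatedly missing one field that matters downstream, such as totals.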

What Happens Next?

IBM's prediction is not pure speculation: the market is already moving in this direction.

Q2 2026 - Vendor consolidation: Single-model vendors will likely build synthetic pipelines (a costly and time-consuming upgrade), get acquired by platforms with multimodal infrastructure, or fade from relevance if they cannot adapt.

Q3-Q4 2026 - Enterprise migration: Organizations tied to single-model contracts will run proofs-of-concept with vendors using synthetic pipelines, compare results for accuracy, speed, and reliability, and switch providers or demand upgrades to more robust workflows.

2027 - Industry standard: Synthetic parsing pipelines will likely become the default for enterprise document automation, and single-model processing will be seen as outdated, much like a reliance on fax machines.

The Bottom Line

If your document automation vendor still relies on a single AI model for everything, you are likely paying more for compute than necessary, accepting inconsistent or lower accuracy, and slowing down document workflows compared to competitors.

The move to synthetic parsing pipelines is not optional. It is inevitable. The real question is whether your team will adopt it early and gain reliable, scalable automation, or wait and end up playing catch-up.
