Vision AI document processing is transforming how businesses extract, understand, and automate data from documents. Powered by vision language models, it goes beyond traditional OCR by interpreting layout, context, and relationships between elements, delivering structured, reliable data across thousands of documents.

Key Takeaways:

- Vision AI is becoming the new standard for document processing, outperforming OCR and IDP across complex, real-world documents.

- Businesses can reduce document processing costs by 75 to 92% by switching from manual workflows or OCR-based systems to vision AI.

- Platforms like Parseur leverage vision AI to deliver fast, accurate, and scalable document automation without templates or manual setup.

What Is Vision AI Document Processing?

Vision AI document processing is a new approach to extracting and understanding data from documents using vision language models (VLMs). These AI systems can interpret both text and visual structure simultaneously.

The Document AI market, which includes VLM-based processing, is projected to grow from USD 14.66 billion in 2025 to USD 27.62 billion by 2030 at a CAGR of 13.5%.
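As a quick sanity check, the growth rate can be recomputed from the two market figures quoted above. The sketch below uses the standard compound annual growth rate formula and assumes five compounding years (2025 to 2030); the variable names are illustrative.

```python
# Figures quoted above, in USD billions.
start_value = 14.66  # 2025
end_value = 27.62    # 2030
years = 2030 - 2025  # assumed number of compounding periods

# Standard CAGR definition: (end / start) ** (1 / years) - 1
cagr = (end_value / start_value) ** (1 / years) - 1
print(f"Implied CAGR: {cagr:.1%}")
```

The result lands at roughly 13.5%, which is consistent with the quoted rate.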

Unlike traditional methods, which treat documents as plain text, vision AI understands documents more like humans do: by analyzing layout, context, and relationships among elements. This makes it a major step forward in AI document understanding, especially for complex, real-world documents.

Vision AI vs OCR vs IDP

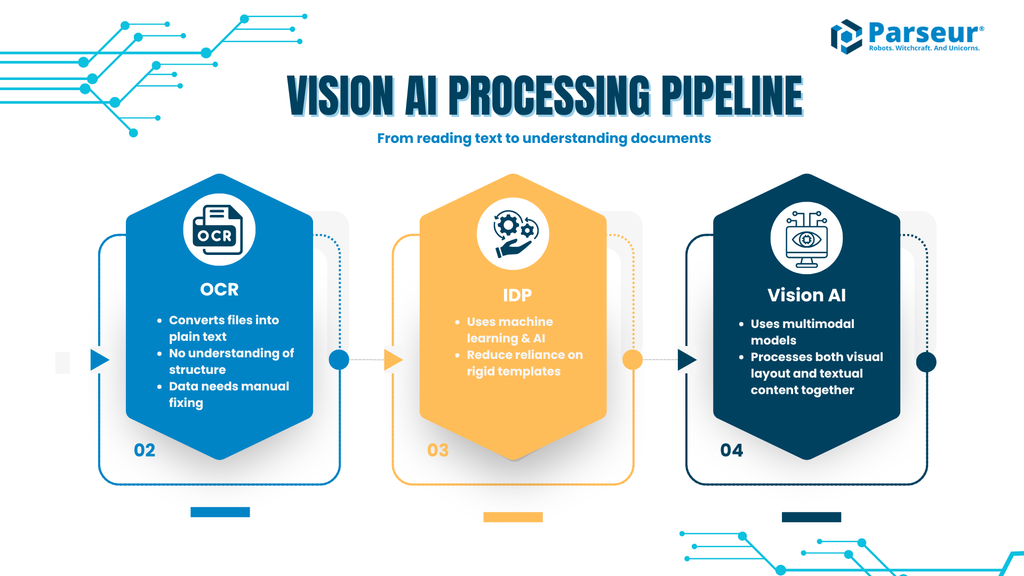

To understand the evolution of document processing, it helps to distinguish between three layers of technology.

Traditional OCR (Optical Character Recognition)

OCR converts scanned documents, PDFs, or images into machine-readable text. Modern OCR engines can also detect layout elements such as lines, tables, and text blocks. However, OCR primarily focuses on character recognition. It does not inherently interpret the meaning of the content or how different fields relate to each other.

IDP (Intelligent Document Processing)

IDP builds on OCR by adding layers of machine learning, document classification, field extraction, and validation. Many IDP systems reduce reliance on rigid templates and can handle semi-structured documents such as invoices and receipts. However, they still typically rely on training data, configuration, or predefined logic to maintain accuracy, especially when document layouts vary significantly or when handling highly unstructured content.

Vision AI Document Processing (Vision-Language Models)

Vision AI introduces a newer approach using multimodal models that process both visual layout and textual content together. These systems can infer context, for example identifying totals in invoices, mapping relationships in tables, or recognizing signatures, without relying heavily on predefined templates. Instead of treating text and structure separately, vision AI models reason over the document as a whole.

This change moves document processing from "reading text" to understanding documents as structured data sources.

How vision language models work

Vision language models such as OpenAI's GPT models, Anthropic's Claude, and Google's Gemini combine computer vision and natural language processing into a single system. Instead of running separate tools for OCR, layout detection, and parsing, these models process the entire document at once.

At a high level, they work by:

- Analyzing the visual structure - identifying sections like headers, tables, images, and form fields

- Extracting text in context - not just what the text says, but where it appears and what it relates to

- Understanding relationships - linking fields (for example, matching line items with totals, associating labels with values)

- Generating structured output - returning clean, usable data (JSON, key-value pairs, tables)

This allows a single system to handle documents that previously required multiple tools and layers of logic.
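The last step, generating structured output, usually means turning the model's text reply into data that code can rely on. The sketch below is one minimal way to do that, assuming the model was asked to reply with a JSON object. The field names in `REQUIRED_KEYS` and the helper `parse_model_reply` are hypothetical, not part of any vendor's API.

```python
import json
import re

FENCE = "`" * 3  # a Markdown code fence, built this way to keep the example readable
REQUIRED_KEYS = {"invoice_number", "date", "total"}  # hypothetical schema
_FENCED = re.compile(FENCE + r"(?:json)?\s*(.*?)" + FENCE, re.DOTALL)


def parse_model_reply(reply: str) -> dict:
    """Parse a model's text reply into a dict, tolerating Markdown code fences."""
    # Some models wrap their JSON in a fenced block; unwrap it if present.
    match = _FENCED.search(reply)
    payload = match.group(1) if match else reply
    data = json.loads(payload)  # raises json.JSONDecodeError (a ValueError) on bad JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = REQUIRED_KEYS - set(data)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    return data


reply = '{"invoice_number": "INV-1", "date": "2026-01-05", "total": "1250.00"}'
print(parse_model_reply(reply)["total"])
```

Failing loudly on a missing field is a deliberate choice here: a rejected document can be retried or reviewed, while a silently incomplete record tends to surface much later in downstream systems.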

Why is 2026 the turning point for vision AI?

Vision AI document processing has existed in early forms for years, but 2026 marks a clear inflection point for three reasons.

1. Production-level accuracy

Modern vision language models now achieve significantly higher accuracy on complex documents, especially those with mixed layouts, tables, and handwritten elements. Fine-tuned VLMs are reported to reach up to 99% accuracy when paired with human-in-the-loop workflows, as in Hyperscience's production setups for invoices and IDs. On complex layouts and handwriting, that is well above traditional OCR baselines.

2. Rapid cost reduction

Running large models used to be expensive, limiting adoption. Improved model efficiency and selective processing (using advanced models only where needed) have reduced costs sufficiently for high-volume business use cases.
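Selective processing can be as simple as a routing rule that sends only the hard pages to the more expensive model. The sketch below is a hypothetical illustration: the `PageSignals` fields, the 0.95 threshold, and the engine labels are assumptions, and real systems would derive these signals from their own OCR or classification step.

```python
from dataclasses import dataclass


@dataclass
class PageSignals:
    """Cheap signals gathered before deciding how to process a page (hypothetical)."""
    ocr_confidence: float  # mean OCR confidence, from 0.0 to 1.0
    has_handwriting: bool
    has_tables: bool


def choose_engine(page: PageSignals, min_confidence: float = 0.95) -> str:
    """Return "vlm" for pages that need the advanced model, otherwise "ocr"."""
    if page.has_handwriting or page.has_tables:
        return "vlm"  # visual structure that plain OCR tends to lose
    if page.ocr_confidence < min_confidence:
        return "vlm"  # the OCR engine itself is unsure
    return "ocr"      # clean printed text: the cheaper path is enough


print(choose_engine(PageSignals(0.99, has_handwriting=False, has_tables=False)))
print(choose_engine(PageSignals(0.99, has_handwriting=True, has_tables=False)))
```

The threshold is the main cost lever: raising it sends more pages to the advanced model, lowering it saves money at the risk of more extraction errors.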

3. Reduced complexity

Older systems required templates, rules, and constant maintenance. Vision AI reduces that overhead by automatically adapting to layout changes and new formats. This makes it viable for scaling document workflows across teams and departments.

Together, these changes make vision AI document processing not just an experimental technology but a practical solution for production workflows.

From extraction to understanding

The biggest change is not just better OCR. It is a move toward true AI document understanding.

Instead of asking "Can we extract this field?", teams can now ask "Can we reliably turn this document into structured, usable data?"

That distinction matters because in real workflows like finance, operations, logistics, and HR, consistency and reliability matter more than one-off accuracy.

How Vision AI Works For Documents

Vision AI document processing is powered by a new class of systems designed for multimodal understanding: the ability to interpret text, layout, and visual elements at the same time.

This is what sets it apart from traditional OCR and even earlier AI document processing tools. Instead of breaking documents into separate steps (OCR, then layout detection, then parsing), vision AI handles everything in a unified process, resulting in more accurate and reliable document understanding.

Multimodal understanding: text, layout, and visual context

Traditional systems process documents in layers. First, OCR extracts text. Then, other tools try to reconstruct the structure. This often leads to errors because the system loses context along the way.

Vision language models take a different approach. They analyze the entire document at once, combining:

- Text content (words, numbers, symbols)

- Layout structure (headers, tables, sections, spacing)

- Visual elements (logos, signatures, stamps, formatting cues)

For example, when processing an invoice, a vision AI model does not just read "Total: $1,250." It understands that "Total" is a label, "$1,250" is the associated value, and their proximity and alignment indicate a relationship.

This ability to interpret documents holistically is what makes vision AI document processing far more reliable than older methods.

Context-aware extraction (beyond text recognition)

One of the biggest limitations of OCR is that it treats text as isolated characters. Traditional OCR typically achieves 95-99% accuracy on clean, printed text but drops to 60-70% on handwriting or complex layouts, according to Happy2Convert. Vision AI, on the other hand, performs context-aware extraction.

This means it does not just extract text. It understands meaning and relationships between elements. For example, in a table it links quantities to prices and calculates totals correctly. In forms it matches labels with their corresponding values. In contracts it identifies clauses and associates them with sections.

Instead of outputting raw text, vision AI produces structured, usable data. This is critical for real-world workflows. A misplaced number or misinterpreted field can break downstream systems. Context-aware extraction reduces these errors by preserving how data is organized and related.

Trained on millions of document variations

Vision-language models are trained on massive datasets that include millions of documents, such as invoices, receipts, contracts, forms, and reports.

This broad training enables them to handle different layouts without templates, adapt to new formats automatically, and recognize patterns across industries and document types. Even if two invoices look completely different (different vendors, formats, or languages), the model can still identify key elements like totals, dates, and line items.

This eliminates the need for constant retraining or manual rule updates, which were major limitations in earlier document automation workflows.

Real example: Invoice processing step-by-step

Here is how vision AI processes a typical invoice in practice.

Step 1: Document input. An invoice arrives as a PDF via email or upload.

Step 2: Visual analysis. The model scans the entire document, identifying header sections (vendor info, invoice number, date), tables (line items), and summary fields (subtotal, tax, total).

Step 3: Text and context extraction. Instead of extracting text line by line, the model captures: vendor name from the header or logo area, invoice number associated with the correct label, line items grouped into structured rows, and total amount correctly identified even if formatting varies.

Step 4: Relationship mapping. The model connects related data points: quantities to unit prices to totals, dates to payment terms, and line items to the overall invoice summary.

Step 5: Structured output. The final output is clean, structured data in JSON or key-value pairs, with table data preserved as rows and columns, ready for direct integration into accounting or ERP systems.

This entire process happens in seconds, without manual intervention or predefined templates.
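To make Step 5 concrete, the sketch below shows what the structured output for such an invoice might look like once it is serialized to JSON. The schema, class names, and sample values are illustrative assumptions, not a fixed format produced by any particular model or product.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class LineItem:
    description: str
    quantity: int
    unit_price: str  # money kept as strings to avoid float rounding in JSON
    amount: str


@dataclass
class Invoice:
    vendor: str
    invoice_number: str
    date: str
    total: str
    line_items: list[LineItem] = field(default_factory=list)


# Sample values are made up for illustration.
invoice = Invoice(
    vendor="Acme Supplies",
    invoice_number="INV-1",
    date="2026-01-05",
    total="1250.00",
    line_items=[
        LineItem("Widget", 5, "200.00", "1000.00"),
        LineItem("Shipping", 1, "250.00", "250.00"),
    ],
)

# asdict() converts nested dataclasses too, so table rows stay grouped under line_items.
payload = json.dumps(asdict(invoice), indent=2)
print(payload)
```

Keeping line items as a list of row objects, rather than flattening them into the header fields, is what preserves the table structure for an accounting or ERP import.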

What Vision AI Can Do That Traditional OCR Struggles With

While OCR remains a foundational technology in document processing, vision AI introduces capabilities that go beyond text recognition, particularly in scenarios involving visual context, ambiguity, and variability.

Here are key areas where vision AI provides a clear advantage:

- Checkbox and visual state detection: Determine whether a checkbox is checked, unchecked, or indeterminate, something OCR alone cannot reliably infer.

- Deep layout and formatting awareness: Interpret visual cues such as font size, spacing, alignment, and color to understand document hierarchy and structure.

- Image-level understanding: Extract meaning from non-textual elements such as stamps, signatures, diagrams, or embedded photos.

- Improved handwriting recognition: Handle a broader range of handwriting styles (cursive, print, mixed), especially in noisy or real-world documents.

These capabilities stem from vision AI's ability to process both text and visual context simultaneously, rather than treating them as separate layers.

Key Capabilities of Vision AI in Document Processing

Modern vision AI systems extend document processing beyond extraction into interpretation. They are designed to handle the variability, ambiguity, and imperfections found in real-world documents.

1. Handwriting Recognition at Scale

Handwriting has historically been a weak point for OCR systems, which are optimized for clean, printed text.

Vision AI models significantly improve performance by leveraging contextual understanding. Rather than recognizing characters in isolation, they interpret words and phrases within the broader context of the document.

This enables more reliable extraction from handwritten notes on invoices or forms, delivery instructions and annotations, and signatures and marginal comments in contracts.

While accuracy varies with document quality and language, recent benchmarks show substantial improvements in handwriting recognition performance over traditional OCR pipelines.

2. Complex Table Extraction

Tables present structural challenges that go beyond text recognition. They often include merged or split cells, multi-line entries, nested hierarchies, and multi-page continuity.

Traditional OCR-based systems may detect text within tables, but frequently lose the relationships between rows and columns. Vision AI addresses this by analyzing tables as visual structures, enabling it to preserve row-column relationships, handle irregular or merged layouts, and maintain continuity across pages.

This is particularly valuable for invoice line items, financial reports, and operational data embedded in PDFs. The output is structured data that requires significantly less post-processing.
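Multi-page continuity is easier to picture with a small example. The sketch below stitches per-page table fragments into a single table, assuming each fragment has already been extracted as a dict with an optional `header` and a list of `rows`. That fragment format and the `stitch_table` helper are hypothetical, chosen only to illustrate the idea.

```python
def stitch_table(fragments: list[dict]) -> dict:
    """Merge per-page table fragments into one table.

    Each fragment looks like {"header": list[str] or None, "rows": list[list[str]]}.
    Continuation pages may repeat the header or omit it.
    """
    if not fragments or not fragments[0].get("header"):
        raise ValueError("the first fragment must carry the table header")
    header = fragments[0]["header"]
    rows = []
    for page_no, frag in enumerate(fragments, start=1):
        frag_header = frag.get("header")
        if frag_header and frag_header != header:
            raise ValueError(f"page {page_no}: header differs from the first page")
        for row in frag["rows"]:
            if len(row) != len(header):
                raise ValueError(
                    f"page {page_no}: expected {len(header)} cells, got {len(row)}"
                )
            rows.append(row)
    return {"header": header, "rows": rows}


pages = [
    {"header": ["Item", "Qty", "Amount"], "rows": [["Widget", "5", "1000.00"]]},
    {"header": None, "rows": [["Shipping", "1", "250.00"]]},
]
print(stitch_table(pages)["rows"])
```

The row-length check is the useful part: a row with the wrong number of cells is a common sign that a merged or split cell was misread and needs review.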

3. Advanced Layout Understanding

Documents communicate meaning not only through text, but also through layout. Vision AI models are trained to interpret spatial and visual patterns, allowing them to:

- Identify document sections (headers, footers, body)

- Determine reading order in multi-column layouts

- Separate metadata from primary content

- Detect recurring elements such as page numbers or disclaimers

For example, a value at the bottom of a document can be interpreted as a total, a logo can help identify the document's source, and a footer disclaimer can be excluded from the extraction logic. This level of layout awareness improves consistency across documents with varying formats.
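To see why reading order is a layout problem rather than a text problem, consider a simple geometric heuristic: assign each text block to a column by its horizontal position, then read each column top to bottom. This is not how a vision language model works internally. It is a deliberately simple stand-in, with hypothetical names and coordinates, that shows what "reading order" has to recover.

```python
from dataclasses import dataclass


@dataclass
class TextBlock:
    text: str
    x: float  # left edge, in page units
    y: float  # top edge; smaller values are higher on the page


def reading_order(blocks: list[TextBlock], page_width: float, columns: int = 2) -> list[TextBlock]:
    """Order blocks column by column, top to bottom within each column."""
    col_width = page_width / columns

    def sort_key(block: TextBlock) -> tuple[int, float]:
        # Clamp so a block at the far right edge still falls in the last column.
        col = min(int(block.x // col_width), columns - 1)
        return (col, block.y)

    return sorted(blocks, key=sort_key)


blocks = [
    TextBlock("A", x=0, y=0),
    TextBlock("C", x=300, y=0),
    TextBlock("B", x=0, y=100),
    TextBlock("D", x=300, y=100),
]
print([b.text for b in reading_order(blocks, page_width=600)])
```

A naive top-to-bottom sort would interleave the two columns (A, C, B, D). The heuristic breaks down on full-width headings and uneven columns, which is where models that reason over the whole page have the advantage.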

4. Multi-Language and Mixed-Language Support

Traditional document processing systems often require language-specific configurations or models.

Vision AI systems, particularly those based on large multimodal models, are trained on diverse datasets and can generalize across languages more effectively. This enables extraction from documents in multiple languages, recognition of non-Latin scripts (such as Chinese, Arabic, or Cyrillic), and handling of mixed-language documents on the same page.

While performance can still vary across languages and scripts, vision AI reduces the need for manual configuration in global workflows.

5. Robustness to Real-World Document Quality

In production environments, documents are rarely clean or standardized. Common issues include low-resolution scans, skewed or rotated images, faded or low-contrast text, and mobile-captured photos.

OCR systems can degrade significantly under these conditions. Vision AI improves resilience by incorporating visual context and probabilistic reasoning. It can correct orientation and alignment, infer missing or unclear characters, and extract usable data from degraded inputs. This reduces preprocessing requirements and increases reliability in high-volume pipelines.

From Capabilities to Operational Impact

Individually, these capabilities are meaningful. Combined, they enable a shift toward more adaptive and resilient document processing systems.

Instead of relying heavily on fixed templates or rigid rules, teams can process documents that vary in format, include handwritten or visual elements, and contain inconsistencies or quality issues.

In practice, most production systems still combine OCR, IDP techniques, and vision AI. However, vision AI introduces a critical layer of contextual understanding, making it possible to extract not just text, but structured, usable data, more consistently across real-world scenarios.

For a deeper look at how single-model approaches compare to multi-model pipelines, see our breakdown of synthetic parsing and why it matters.

Vision AI Use Cases: Real-World Document Processing Applications

The true value of vision AI document processing becomes clear when applied to real business workflows. Across industries, teams are moving beyond basic OCR toward systems that deliver reliable AI document understanding, even when documents vary in format, structure, and quality.

1. Invoice Processing

Invoice automation has traditionally required vendor-specific templates or model retraining for new layouts. Even modern IDP systems often need configuration or supervised learning to maintain accuracy across vendors.

Vision AI removes much of this dependency. It can identify key fields (invoice number, total, date) based on context rather than position, extract line items from visually complex or inconsistent tables, and adapt to new vendor formats without prior setup.

Traditional OCR and IDP cannot natively process completely unseen invoice layouts without configuration, training, or rules. Vision AI can.

Impact: Reduced onboarding time for new vendors, lower maintenance overhead, and more scalable accounts payable automation.

2. Contract Analysis

Contracts are inherently unstructured. Clauses vary in wording and placement, key information is distributed across long documents, and structure is semantic rather than visual.

Traditional systems require predefined fields, clause libraries, or manual annotation workflows. Vision AI can instead identify clauses based on meaning (such as termination or payment terms), extract key dates even when phrased differently, and detect signatures and approval indicators visually.

Impact: Faster contract review, reduced reliance on manual tagging, and more flexible legal data extraction.

3. Documents Combining Text, Handwriting, and Visual Elements

Many real-world documents include handwritten notes, stamps or seals, signatures, and mixed printed and scanned content. OCR pipelines typically separate handwriting into a different process or fail when text quality degrades.

Vision AI processes these elements within a single model, allowing it to interpret handwriting in context, recognize stamps or visual markers as meaningful signals, and associate annotations with the correct sections of a document.

Impact: More complete data capture, fewer edge-case failures, and better handling of real-world documents.

4. Table Extraction with Irregular or Unknown Structures

Table extraction is a known limitation in OCR-based systems when layouts are inconsistent, cells are merged or nested, or tables span multiple pages. IDP systems can improve this, but often require predefined table structures or labeled training data.

Vision AI approaches tables as visual relationships rather than fixed schemas. It can reconstruct row-column relationships dynamically, interpret irregular layouts without prior examples, and maintain continuity across pages.

Impact: Reliable extraction of financial and operational data, less manual cleanup, and better downstream usability.

5. Understanding Visual Meaning Beyond Text

Some critical document elements are not textual at all: checkboxes, highlights, logos, diagrams, and formatting cues like bold, spacing, and positioning. Text-focused OCR largely ignores these. IDP may capture them, but only if explicitly programmed.

Vision AI can determine whether a checkbox is checked, use layout cues to infer importance (such as totals or headings), and interpret visual hierarchy to understand document structure.

Impact: More accurate field identification, better contextual understanding, and reduced reliance on rules.

How Parseur Uses Vision AI For Document Automation

At Parseur, vision AI is part of a broader multi-model pipeline designed for production reliability. Rather than relying on a single approach, Parseur routes each element of a document to the method that handles it best: AI-powered parsing for variable layouts, OCR for scanned documents, and table detection to preserve row and column relationships.

This means businesses get the accuracy benefits of vision AI, combined with the consistency and cost efficiency of a structured pipeline. New document formats are handled automatically, without templates or manual configuration. And as layouts change, the system adapts without breaking existing workflows.

Common Challenges in Vision AI (And How to Solve Them)

While vision AI document processing offers significant advantages in accuracy, speed, and cost, it is not without challenges. Understanding these limitations and how to address them is key to successfully implementing AI document understanding at any scale.

1. Hallucination Risk (And How to Mitigate It)

Like all AI systems, vision language models can occasionally generate incorrect or hallucinated outputs, especially when document quality is poor or data is missing. For example, a model might infer a value that is not clearly present, misinterpret ambiguous handwriting, or fill in gaps based on context rather than actual data.

How to mitigate this: Use confidence scores to flag uncertain extractions. Apply validation rules (for example, totals must match line items). Set up human review workflows for critical fields. Combine vision AI with structured logic (hybrid pipelines).

The goal is not to eliminate hallucinations entirely, but to catch and control them before they impact downstream systems.
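Two of the mitigations above, confidence thresholds and validation rules, are straightforward to sketch. The example below assumes the extraction step returns each field with a `value` and a `confidence`, and that every charge (including tax or shipping) appears as a line item. The data shape, the 0.9 threshold, and the `review_reasons` helper are all hypothetical.

```python
from decimal import Decimal


def review_reasons(invoice: dict, min_confidence: float = 0.9) -> list[str]:
    """Return the reasons an extraction should go to human review (empty if none)."""
    reasons = []

    # Rule 1: flag any field the model was unsure about.
    for name, extracted in invoice["fields"].items():
        if extracted["confidence"] < min_confidence:
            reasons.append(f"low confidence: {name}")

    # Rule 2: the total must match the sum of the line items.
    line_sum = sum(
        (Decimal(item["amount"]) for item in invoice["line_items"]), Decimal("0")
    )
    total = Decimal(invoice["fields"]["total"]["value"])
    if line_sum != total:
        reasons.append(f"total {total} does not match line items {line_sum}")

    return reasons


extraction = {
    "fields": {
        "invoice_number": {"value": "INV-1", "confidence": 0.99},
        "total": {"value": "1250.00", "confidence": 0.72},
    },
    "line_items": [{"amount": "1000.00"}, {"amount": "200.00"}],
}
print(review_reasons(extraction))
```

Using `Decimal` instead of floats keeps the arithmetic check exact. A document with an empty list of reasons can flow straight through, while anything else is routed to a reviewer.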

2. Data Privacy and Compliance (EU AI Act and Beyond)

Processing sensitive documents, such as financial records, contracts, or medical data, raises important privacy and compliance concerns. Regulations like the EU AI Act and GDPR require businesses to ensure secure data handling and storage, transparency in how AI systems process data, and control over where data is processed.

Compliance is not optional. It must be built into the workflow from the start.

How to address this: Choose vendors with enterprise-grade security certifications. Use data encryption in transit and at rest. Consider on-premise or private cloud deployments when needed. Implement access controls and audit logs.

3. Integration with Legacy Systems

Many organizations still rely on legacy systems that were not designed to work with modern AI tools. This can create challenges when integrating vision AI document processing into existing workflows.

Common issues include limited API support, rigid data formats, and manual processes that are difficult to automate.

Solutions: Use automation platforms (Zapier, Make, Power Automate) as a bridge. Export structured data into compatible formats (CSV, Excel, JSON). Start with incremental integrations rather than full system overhauls. A phased approach allows teams to modernize workflows without disrupting operations.
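For legacy systems that can only import flat files, exporting line items to CSV is often the simplest bridge. The sketch below uses Python's standard `csv` module. The invoice dict shape and the column names are assumptions for illustration.

```python
import csv
import io

COLUMNS = ["invoice_number", "description", "quantity", "unit_price", "amount"]


def line_items_to_csv(invoice: dict) -> str:
    """Flatten an invoice into one CSV row per line item."""
    buffer = io.StringIO()
    # extrasaction="ignore" drops any keys that are not in COLUMNS.
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS, extrasaction="ignore")
    writer.writeheader()
    for item in invoice["line_items"]:
        # Repeat the invoice number on every row so each row stands alone.
        writer.writerow({"invoice_number": invoice["invoice_number"], **item})
    return buffer.getvalue()


invoice = {
    "invoice_number": "INV-1",
    "line_items": [
        {"description": "Widget", "quantity": 5, "unit_price": "200.00", "amount": "1000.00"},
        {"description": "Shipping", "quantity": 1, "unit_price": "250.00", "amount": "250.00"},
    ],
}
print(line_items_to_csv(invoice))
```

Repeating the invoice number on each row trades a little redundancy for a file that older import tools can load without any join logic.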

4. Change Management and Team Adoption

Even the best technology can fail without proper adoption. Teams used to manual processes may resist automation or struggle to trust AI outputs.

Common challenges include lack of familiarity with automation tools, fear of errors or job displacement, and unclear workflows during transition.

How to solve this: Provide hands-on training and clear documentation. Start with low-risk workflows to build confidence. Show measurable wins (time saved, error reduction). Keep humans in the loop during early stages.

Successful implementation is not just technical. It is organizational.

Vision AI Is Redefining Document Processing In 2026

Vision AI document processing marks a fundamental move from extracting text to truly understanding documents. With near-human accuracy, significantly lower costs, and the ability to handle complex, real-world formats, it is rapidly displacing standalone OCR and IDP systems in complex document workflows.

As document volumes grow and workflows become more complex, businesses need solutions that are not only accurate but also scalable and adaptable. Vision AI delivers on all three fronts, reducing manual work, improving data quality, and enabling end-to-end automation.

Document processing is no longer just a back-office task. It is becoming a strategic advantage. Companies that adopt vision AI early will be better positioned to streamline operations, cut costs, and build more intelligent, data-driven workflows.
