Vision AI and OCR both extract data from documents, but they differ significantly in how they handle real-world complexity. Understanding when to use each can have a major impact on accuracy, cost, and scalability.

Key Takeaways:

- Vision AI delivers higher accuracy by understanding context, layout, and meaning, not just text.

- OCR works best for clean, consistent, high-volume documents with fixed formats.

- Tools like Parseur make it easy to apply Vision AI in real workflows without templates or complex setup.

Your company processes 500 invoices per month. Some are clean PDFs from major vendors. Others are faded scans from small suppliers. A few have handwritten notes. You need to automate extraction.

Do you use Vision AI or OCR?

This is where most teams get stuck. On paper, both technologies promise the same outcome: turning documents into structured data. But in real workflows, the difference between these approaches becomes very clear, especially when formats vary, quality drops, or volume increases.

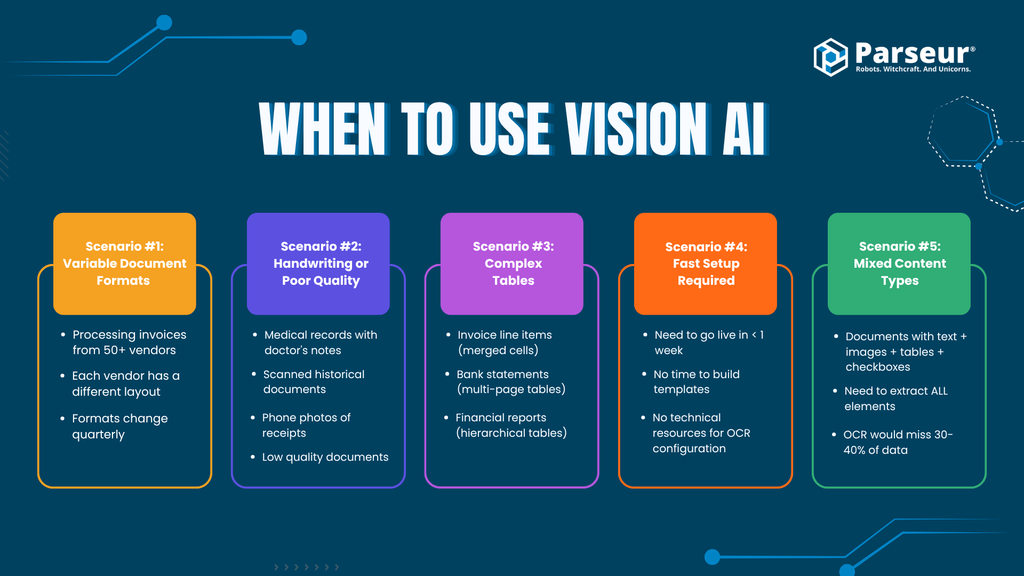

Use Vision AI when:

- Document formats vary (different layouts, vendors, templates)

- Documents include handwriting

- Quality is inconsistent (scans, photos, faded documents)

- Tables are complex (merged cells, multi-page, borderless)

- You want minimal maintenance over time

Use Traditional OCR when:

- Documents are identical (same form, every time)

- The format never changes (for example, standardized government forms like W-9 or 1099)

- Quality is perfect (high-resolution PDFs, clean scans)

- The budget is extremely limited

- You are processing millions of identical documents

Use Both (Hybrid) when:

- 80% of documents are simple and 20% are complex

- You want to optimize cost (OCR for simple cases, Vision AI for edge cases)

This guide breaks down accuracy, speed, cost, and complexity across all three approaches so you can decide with confidence, based on real-world performance.

OCR vs Vision AI: The Core Difference

When comparing Vision AI and OCR, it helps to understand what each technology actually does. Both aim to extract data from documents, but they approach the problem very differently.

Traditional OCR (Optical Character Recognition)

OCR is like a kindergartener learning to read. It recognizes individual characters (A, B, C, 1, 2, 3), reads left-to-right and top-to-bottom, does not understand context or meaning, and often needs templates to know where fields are located.

This is where OCR shows its limits. It can read text, but it does not understand what that text represents.

How OCR works:

- Scan document and convert to pixels

- Identify character shapes ("This looks like an A")

- Convert shapes to text ("Invoice #12345")

- Output raw, unstructured text

OCR is accurate for clean text but fragile when structure or layout changes.
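To make that fragility concrete, here is a minimal sketch of template-style extraction over raw OCR text. The sample text, the line positions, and the `extract_with_template` helper are hypothetical illustrations, not the behavior of any specific OCR product.

```python
import re

# Hypothetical raw OCR output: plain text lines with no field meaning attached.
RAW_OCR_TEXT = "ACME Supplies\nInvoice #12345\nDate: 2024-03-01\nTotal: $1,234.56"

# Hypothetical template: the line index where each field is expected,
# plus a pattern that pulls the value out of that line.
TEMPLATE = {
    "invoice_number": (1, re.compile(r"Invoice #(\d+)")),
    "total": (3, re.compile(r"Total: \$([\d,]+\.\d{2})")),
}


def extract_with_template(raw_text, template):
    """Return field -> value, or None when the expected line does not match."""
    lines = raw_text.splitlines()
    result = {}
    for field, (line_index, pattern) in template.items():
        match = pattern.search(lines[line_index]) if line_index < len(lines) else None
        result[field] = match.group(1) if match else None
    return result


# Same content, but the vendor added an address line, shifting every field down.
SHIFTED_TEXT = "ACME Supplies\nPO Box 42\nInvoice #12345\nDate: 2024-03-01\nTotal: $1,234.56"

original_fields = extract_with_template(RAW_OCR_TEXT, TEMPLATE)
shifted_fields = extract_with_template(SHIFTED_TEXT, TEMPLATE)
```

With the original layout, both fields are found. After a single extra line, the same template returns `None` for every field even though the characters were read correctly.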

Vision AI (Vision Language Models)

Vision AI is like a college student reading a textbook. It understands what it is reading, not just what the letters spell. It understands layout, structure, and meaning together, recognizes document types automatically (invoice, receipt, form), identifies relationships between elements, and adapts to format changes without constant retraining.

The key shift is this: Vision AI does not just read text. It interprets the document, using vision language models to process both text and visual structure simultaneously.

How Vision AI works:

- Scan document and build a visual representation

- Understand structure ("This is an invoice with a header, table, and totals section")

- Extract with context ("Invoice #12345 is in the header, total is $1,234.56")

- Output clean, structured, ready-to-use data
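As a rough illustration of what "structured, ready-to-use data" can mean in practice, the sketch below turns the extracted strings from the example above into typed fields. The `InvoiceFields` schema and `to_structured` helper are assumptions made for illustration, not the output format of Parseur or any other tool.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class InvoiceFields:
    # Hypothetical schema; real tools define their own field names.
    document_type: str
    invoice_number: str
    total: float
    currency: str


def to_structured(document_type, invoice_number, total_text):
    """Normalize extracted strings into typed fields."""
    currency = "USD" if total_text.startswith("$") else "UNKNOWN"
    amount = float(total_text.lstrip("$").replace(",", ""))
    return InvoiceFields(document_type, invoice_number, amount, currency)


record = to_structured("invoice", "12345", "$1,234.56")
print(json.dumps(asdict(record), indent=2))
```

Typed output like this can be sent to a spreadsheet or accounting system without a separate cleanup step.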

The Core Difference at a Glance

| | OCR | Vision AI |
|---|---|---|
| Reads | Letters | Meaning |
| Approach | Character recognition | Document understanding |
| Format handling | Template-dependent | Context-aware |

The difference between these technologies is not just accuracy. It is capability. That distinction becomes critical as soon as documents are no longer perfectly clean and predictable.

Vision AI vs OCR: 5 Critical Dimensions

1. Accuracy

OCR works well on clean documents, but small issues with fonts, spacing, and scan quality introduce errors. In most real-world scenarios, handwriting is where OCR breaks down quickly, while Vision AI maintains high accuracy through contextual understanding.

OCR misreads characters. Vision AI uses context (such as expected currency format) to correct them.
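The idea of using an expected format to repair misread characters can be sketched with a simple rule-based stand-in. A vision language model does this implicitly rather than with a lookup table. The look-alike map and the `correct_currency` helper below are illustrative assumptions only.

```python
import re

# Common look-alike confusions in OCR output (illustrative, not exhaustive).
LOOKALIKES = str.maketrans({"O": "0", "o": "0", "l": "1", "I": "1", "S": "5", "B": "8"})

# Expected format for a US currency amount such as $1,234.56.
CURRENCY_PATTERN = re.compile(r"^\$\d{1,3}(,\d{3})*\.\d{2}$")


def correct_currency(raw):
    """Return a value matching the expected currency format, or None."""
    if CURRENCY_PATTERN.match(raw):
        return raw  # already valid, leave untouched
    candidate = raw.translate(LOOKALIKES)
    # Accept the substitution only if it yields a well-formed amount.
    return candidate if CURRENCY_PATTERN.match(candidate) else None


print(correct_currency("$l,234.56"))  # letter "l" misread for digit "1"
```

Returning `None` when no well-formed amount can be recovered lets a workflow flag the field for human review instead of guessing.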

2. Speed (Including Human Time)

At first glance, OCR seems faster. Processing time is roughly 5 to 30 seconds per document for OCR versus 10 to 20 seconds for Vision AI. But raw processing time does not tell the full story.

| Stage | OCR | Vision AI |
|---|---|---|
| Extraction | Fast | Moderate |
| Error correction | 5 to 15 min/doc | 1 to 2 min/doc |

OCR shifts the workload to humans. Vision AI reduces it.

3. Cost (Total Cost of Ownership)

OCR often requires licenses, infrastructure, and setup. Vision AI-powered tools like Parseur use flexible, usage-based pricing. But the hidden costs matter most.

With 500 documents per month:

- OCR review time: 10 minutes per doc → 83 hours per month

- Vision AI review time: 2 minutes per doc → 16.7 hours per month

Time saved: roughly 67 hours per month. In any cost comparison, labor costs quickly outweigh software costs. Poor data quality costs organizations an average of USD 12.9 million per year.
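The review-time figures above follow from simple arithmetic, which can be reproduced with your own volumes. The function name is an arbitrary choice. The per-document review times are the ones stated in this section.

```python
DOCS_PER_MONTH = 500


def review_hours(docs, minutes_per_doc):
    """Convert per-document review minutes into total hours per month."""
    return docs * minutes_per_doc / 60


ocr_hours = review_hours(DOCS_PER_MONTH, 10)     # 10 minutes per document
vision_hours = review_hours(DOCS_PER_MONTH, 2)   # 2 minutes per document
hours_saved = ocr_hours - vision_hours

print(f"OCR: {ocr_hours:.1f} h, Vision AI: {vision_hours:.1f} h, saved: {hours_saved:.1f} h")
```

Multiplying `hours_saved` by your own loaded hourly rate gives a rough monthly labor figure to set against software costs.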

4. Setup and Maintenance

OCR requires templates that define where each field lives. Vision AI does not. When a vendor changes their invoice layout, OCR breaks and requires 2 to 4 hours to rebuild the template. Vision AI requires no action.

As McKinsey notes, 45% of work activities could be automated using already demonstrated technology. Template maintenance is exactly the kind of repetitive overhead that holds automation back.

5. Flexibility

OCR limitations: requires a template per document type, breaks when layouts change, limited handwriting support, struggles with complex tables, and has no contextual understanding.

Vision AI advantages: no templates required, adapts to layout changes, handles handwriting, extracts complex tables accurately, and understands and validates context.

Across these five dimensions, the pattern is clear. OCR performs well in controlled, predictable environments. Vision AI performs better in real-world, variable conditions. And since most businesses deal with variability across formats, vendors, and document quality, that distinction becomes critical.

5 Things Vision AI Can Do That OCR Cannot

The gap between these technologies is not just about accuracy. Certain document tasks simply break traditional OCR, no matter how well you tune it.

1. Checkbox Recognition

Many real-world documents rely on visual elements like checkboxes (☑ Yes, ☐ No). OCR either ignores these symbols or reads them as random characters.

Vision AI recognizes checkbox patterns as visual elements, detects checked, unchecked, or crossed states, and converts them into structured outputs (true/false, Yes/No). For example, on a medical intake form with 20 checkboxes, OCR captures roughly 5 correctly, whereas Vision AI captures all 20.

Use cases: medical forms, insurance applications, compliance checklists, surveys.
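To show what "converting a checkbox into a structured output" involves, here is a deliberately simple classical heuristic that counts dark pixels inside a cropped box. It is a stand-in for illustration and not how a vision language model works internally. The 5x5 grids, the threshold, and the field names are all assumptions.

```python
def checkbox_state(pixels, threshold=0.2):
    """Classify a cropped checkbox interior as checked (True) or not (False).

    `pixels` is a 2D list of 0 (white) and 1 (dark) values.
    `threshold` is an assumed cutoff for the fraction of dark pixels.
    """
    total = sum(len(row) for row in pixels)
    dark = sum(sum(row) for row in pixels)
    return total > 0 and dark / total >= threshold


# Hypothetical 5x5 crops: an empty box and a box marked with an X.
EMPTY_BOX = [[0] * 5 for _ in range(5)]
CROSSED_BOX = [[1 if c in (r, 4 - r) else 0 for c in range(5)] for r in range(5)]

# Hypothetical form fields mapped to booleans.
form = {
    "smoker": checkbox_state(EMPTY_BOX),
    "allergies": checkbox_state(CROSSED_BOX),
}
print(form)
```

A fixed threshold like this fails on stray marks, faint ticks, or boxes that were filled in and then crossed out, which is where a model that interprets the whole form has the advantage.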

2. Deep Layout Understanding

Documents use layout to convey meaning through bold headers, indented sub-items, and multi-column structures. OCR reads everything linearly, which breaks structure. Vision AI detects font size, bolding, and spacing, recognizes sections and sub-sections, and preserves relationships between data.

3. Image Understanding

Real-world documents include logos, stamps, signatures, and diagrams. OCR either ignores these elements or converts them to meaningless text. Vision AI detects visual objects, interprets diagrams, and extracts meaning from non-text elements.

Examples:

- A red "APPROVED" stamp: OCR misses it, Vision AI detects it and extracts the text and placement

- A contract signature page: OCR outputs unreadable scribbles, Vision AI detects signature presence and links it to the signer's printed name

Use cases: legal documents (stamps, signatures, seals), real estate (floor plans), insurance (damage photos in claims).

4. Handwriting Understanding (Contextual)

Handwriting is inconsistent and ambiguous: letters vary by person, characters overlap or distort, and context is required to interpret meaning. OCR relies on pattern matching, which is easily broken.

Vision AI understands the document rather than just reading characters. It analyzes surrounding words, learns common patterns, and validates against expected formats (names, dosages, dates).

Example from a doctor's prescription, handwritten "Lisinopril 10mg":

- OCR output: "1isinopri1 10 mg"

- Vision AI output: "Lisinopril 10 mg"

Vision AI succeeds because it recognizes drug naming patterns, dosage formats, and context within medical documents.
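Validating against expected names can be approximated with fuzzy matching against a known vocabulary. The sketch below uses Python's standard `difflib` module. The three-entry drug list and the `match_drug_name` helper are hypothetical, and a real model relies on learned context rather than a fixed lexicon.

```python
import difflib

# Hypothetical, tiny lexicon; a real system would use a full drug database.
KNOWN_DRUGS = ["Lisinopril", "Metformin", "Atorvastatin"]


def match_drug_name(raw, lexicon=KNOWN_DRUGS, cutoff=0.7):
    """Return the closest known drug name, or None if nothing is similar enough."""
    by_lowercase = {name.lower(): name for name in lexicon}
    hits = difflib.get_close_matches(raw.lower(), list(by_lowercase), n=1, cutoff=cutoff)
    return by_lowercase[hits[0]] if hits else None


print(match_drug_name("1isinopri1"))  # OCR confused "L"/"l" with "1"
```

The `cutoff` value is a judgment call: set too low, it can silently map a misread word to the wrong drug, so ambiguous matches are better routed to a human.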

Use cases: medical records (prescriptions, notes), legal forms, education (exams, applications).

5. Multi-Modal Reasoning

Modern documents combine text, tables, images, and diagrams. OCR treats each of these separately and inconsistently. Vision AI processes the entire document at once, understands relationships across text, images, and tables, and cross-validates information for consistency.

Example with an invoice containing a product image, description, and price in a table:

- OCR extracts each part separately with no linkage

- Vision AI connects image, description, and price to ensure accuracy
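One simple form of cross-validation is checking that extracted line items add up to the stated total. The sketch below assumes the fields have already been extracted. The item names, amounts, and the `totals_are_consistent` helper are hypothetical.

```python
from decimal import Decimal


def totals_are_consistent(line_items, stated_total, tolerance=Decimal("0.01")):
    """Check that quantity x unit price across all items matches the stated total."""
    computed = sum(
        (Decimal(item["quantity"]) * Decimal(item["unit_price"]) for item in line_items),
        Decimal("0"),
    )
    return abs(computed - Decimal(stated_total)) <= tolerance


# Hypothetical extracted values, kept as strings to avoid float rounding.
items = [
    {"description": "Widget", "quantity": "2", "unit_price": "500.00"},
    {"description": "Shipping", "quantity": "1", "unit_price": "234.56"},
]

print(totals_are_consistent(items, "1234.56"))  # items sum to the stated total
print(totals_are_consistent(items, "1284.56"))  # a misread digit in the total
```

A failed check does not say which field is wrong, but it flags the document for review before bad data reaches downstream systems.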

Advanced AI-based document processing systems can achieve up to 99.9% accuracy in data extraction.

Use cases: e-commerce (product catalogs with images and specs), scientific documents (charts and text explanations), technical manuals (diagrams and instructions).

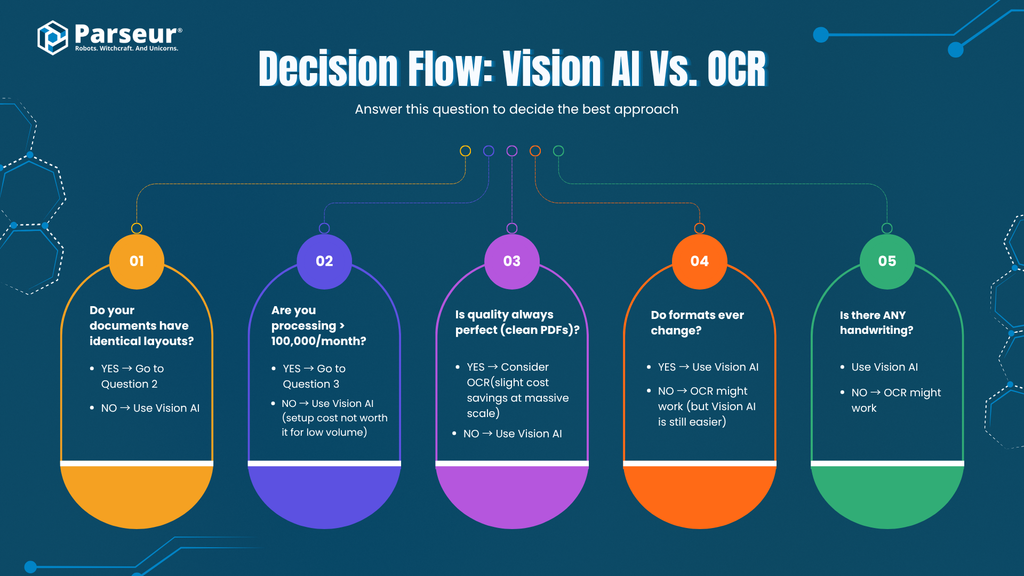

The Decision Framework

Scenario 1: Identical Documents at Massive Scale

Processing 1 million or more standardized documents (such as W-2 or 1099 forms) where the format never changes.

Why OCR wins: The template setup cost spreads across millions of documents. Fixed layout means consistent extraction. Per-document cost is lower at extreme volume.

Scenario 2: Perfect Quality, Simple Structure

Clean, high-resolution PDFs with simple forms and fixed fields. No handwriting, no complex tables, minimal layout variation.

Why OCR wins: No need for contextual understanding. High accuracy with minimal configuration. Faster to implement if templates already exist.

Scenario 3: Extremely Limited Budget

Using open-source OCR (such as Tesseract) with budget constraints that prevent API-based tools, where manual review is part of the process.

The tradeoff: Lower cost comes with higher manual effort. Simpler tooling means more error correction. Avoiding API fees means higher operational overhead.

When Neither OCR Nor Vision AI Is Needed

There is a category of documents that does not require either technology: native text documents, such as emails, digital HTML invoices, and text-based PDFs.

When a document arrives as an email or a natively digital PDF, the text and formatting information are already present in the file. There are no pixels to scan, no characters to recognize, and no visual reconstruction needed. The data can be extracted directly from the underlying structure.

This is an important distinction. Applying OCR or Vision AI to a document that already contains accessible text adds unnecessary processing overhead. For these cases, a purpose-built parser reads the existing text and structure directly, which is faster, cheaper, and more reliable.

For example, if a vendor sends an invoice as an HTML email, the line items, totals, and dates are already encoded as text in the email body. An email parser can extract them directly without converting the document into pixels first.
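A minimal sketch of that direct extraction, using only Python's standard `html.parser`, might look like the following. The email markup, the label/value table layout, and the `parse_invoice_email` helper are assumptions for illustration. Real invoice emails vary widely.

```python
from html.parser import HTMLParser


class TableCellParser(HTMLParser):
    """Collect the text of each <td> cell, grouped by <tr> row."""

    def __init__(self):
        super().__init__()
        self.rows = []
        self._in_cell = False

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self.rows.append([])
        elif tag == "td" and self.rows:
            self._in_cell = True
            self.rows[-1].append("")

    def handle_endtag(self, tag):
        if tag == "td":
            self._in_cell = False

    def handle_data(self, data):
        if self._in_cell:
            self.rows[-1][-1] += data.strip()


# Hypothetical HTML email body with label/value rows.
EMAIL_HTML = """
<table>
  <tr><td>Invoice number</td><td>12345</td></tr>
  <tr><td>Total</td><td>$1,234.56</td></tr>
</table>
"""


def parse_invoice_email(html):
    """Return a label -> value mapping from two-column table rows."""
    parser = TableCellParser()
    parser.feed(html)
    parser.close()
    return {row[0]: row[1] for row in parser.rows if len(row) == 2}


fields = parse_invoice_email(EMAIL_HTML)
print(fields)
```

No image is rendered and no characters are recognized at any point: the values are read straight from the markup.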

Knowing when you do not need OCR or Vision AI is just as valuable as knowing when you do.

When to Use a Hybrid Approach (Best of Both)

The most practical setup for most businesses is a combination of approaches, using each where it performs best.

The 80/20 approach

- 80% of documents: simple, clean, predictable → OCR

- 20% of documents: complex, inconsistent, or low quality → Vision AI

| Step | Action | Outcome |
|---|---|---|
| 1 | Route simple documents to OCR (~$0.01/doc) | Fast, low-cost processing |
| 2 | Route complex documents to Vision AI (~$0.05/doc) | High accuracy on edge cases |
| 3 | Combine outputs into one workflow | Consistent structured data |
| 4 | Monitor and adjust routing rules | Optimize over time |
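The routing step can be sketched as a small rule function. The per-document prices come from the table above. The `Document` fields, the quality score, and the `min_quality` threshold are hypothetical and would need tuning against real documents.

```python
from dataclasses import dataclass

# Approximate per-document prices from the table above, in cents.
OCR_COST_CENTS = 1
VISION_AI_COST_CENTS = 5


@dataclass
class Document:
    name: str
    known_template: bool
    has_handwriting: bool
    scan_quality: float  # hypothetical score: 0.0 (unreadable) to 1.0 (clean)


def route(doc, min_quality=0.8):
    """Send clean, templated documents to OCR and everything else to Vision AI."""
    if doc.known_template and not doc.has_handwriting and doc.scan_quality >= min_quality:
        return "ocr"
    return "vision_ai"


def monthly_cost(docs):
    """Total processing cost in dollars for a batch of documents."""
    cents = sum(OCR_COST_CENTS if route(d) == "ocr" else VISION_AI_COST_CENTS for d in docs)
    return cents / 100


# Hypothetical 80/20 batch of 500 documents.
batch = [Document(f"clean-{i}", True, False, 0.95) for i in range(400)]
batch += [Document(f"messy-{i}", False, True, 0.40) for i in range(100)]

hybrid_cost = monthly_cost(batch)
vision_only_cost = len(batch) * VISION_AI_COST_CENTS / 100
print(f"Hybrid: ${hybrid_cost:.2f}, Vision AI only: ${vision_only_cost:.2f}")
```

At this volume the processing cost is small either way, so the routing rules matter mainly at high volume or when review time on misrouted documents is counted.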

When hybrid makes the most sense

- Mixed document quality (some clean, some messy)

- Multiple vendors or formats

- High volume with cost sensitivity

- Need to balance efficiency and accuracy

Decision matrix

| Factor | OCR | Vision AI | Hybrid |
|---|---|---|---|
| Document format | Identical, fixed | Varies across vendors/layouts | Mixed |
| Document quality | Clean, high-resolution | Inconsistent (scans, photos, faded) | Mixed quality |
| Handwriting | Not supported well | Strong support | Vision AI handles edge cases |
| Tables | Simple, structured | Complex, multi-page, merged cells | Split by complexity |
| Setup and maintenance | High (templates required) | Low (minimal setup) | Moderate |
| Cost | Lowest at scale | Higher per doc | Optimized balance |

How to decide quickly:

- Low variability in documents → OCR is efficient

- High variability → Vision AI is more reliable

- Mix of both → Hybrid gives the best of both worlds

Try Vision AI with Your Own Documents

Parseur uses Vision AI to extract structured data from invoices, receipts, contracts, forms, and more. You can go from document to structured data in minutes: upload a PDF, let Vision AI extract the data automatically, and send it directly to tools like Google Sheets, QuickBooks, or your CRM.

The easiest way to understand the difference is to test it with your most complex or messy document and compare the results with your current setup.

Further reading: Vision AI Document Processing | What is OCR? | AI OCR | AI Document Processing
