Tables break traditional OCR, especially when layouts get messy or inconsistent. Vision AI fixes this by understanding structure, not just text, so your data comes out clean and usable.

Key Takeaways:

- Tables break traditional OCR, especially with merged cells and inconsistent layouts.

- Vision AI understands structure, delivering accurate extraction with minimal fixes.

- Tools like Parseur make it practical: no templates, no maintenance, just usable data.

In every business workflow, tables are where the action happens. From invoices and bank statements to scientific reports and shipping manifests, critical data is organized in rows and columns. Yet for most companies, extracting that data reliably remains a significant challenge.

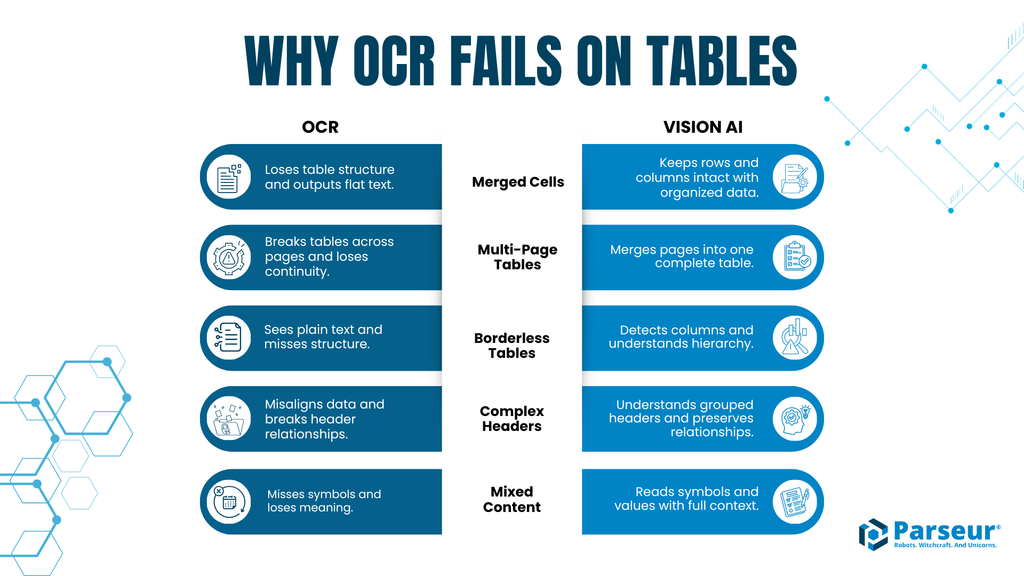

Traditional OCR tools can read plain text, but when it comes to tables, especially complex ones with merged cells, multi-page layouts, or mixed content, they stumble. Misaligned numbers, missing rows, and garbled columns are frustratingly common. For finance teams, operations managers, and researchers, this means hours spent manually correcting errors instead of focusing on analysis and decision-making.

This is why AI table extraction is gaining attention. Vision AI does not just capture text. It understands table structure, relationships, and context, delivering clean, structured data that can feed directly into accounting systems, databases, or analytics pipelines.

In this guide, we cover why tables are the hardest part of document processing, how traditional OCR falls short, and why Vision AI represents a fundamental leap forward.

Tables Are the Final Boss of Document Processing

Your vendor sends an invoice with 47 line items. Your OCR tool runs, and the output looks like this:

- Item #1: Widget A, Quantity: 10, Price: (blank)

- Item #2: (blank), Quantity: $45.99, Price: 5

- Item #3: Completely missing

The original table had merged cells and uneven spacing. OCR reads everything left-to-right, ignoring structure entirely. Now you are stuck manually fixing 47 broken rows. This is exactly where AI table extraction becomes critical.

Why tables break traditional OCR

OCR works well for plain text. But tables are not just text. They are structured data, and that is where things fall apart.

- Merged cells: A header spanning 3 columns gets read as one block of text

- Multi-page tables: Page 2 is treated as a completely new table

- No visible borders: Columns separated by whitespace become jumbled

- Complex layouts: Nested tables, rotated headers, and multi-level columns confuse parsing

- Mixed content: Numbers, text, and symbols in the same row lose alignment

The result is broken rows, misplaced values, and unusable data.

Why this matters

This is not a minor edge case. It is the norm. Over 80% of business documents contain tables, and tables hold the most valuable data, such as invoice line items, transactions, and reports. On complex tables, traditional OCR extraction often fails 25 to 40% of the time, and manual correction takes 5 to 15 minutes per table. At scale, this becomes a massive operational bottleneck.

The shift to Vision AI

Vision AI does not just read characters. It interprets structure. It understands rows, columns, and cell relationships, enabling accurate AI table extraction even in messy, real-world documents. Instead of guessing where data belongs, it sees the table the way you do.

The 5 Reasons Traditional OCR Fails on Tables

Extracting tables accurately is not just about reading text. It is about understanding structure, context, and relationships. Here are the five key reasons traditional OCR struggles, with examples showing how Vision AI solves them.

1. Merged cells

Take a simple invoice header where a merged "Item Description" cell fills the first column, with Quantity and Price beside it. Traditional OCR collapses the entire row into one string, and the table's structure is lost.

Vision AI output: Row 1 is correctly identified as a 3-column header. Row 2 maps Item to "Widget A (Red)", Quantity to 10, and Price to $45.99. Structure is preserved and ready for automation.

The key insight here is what OCR loses in the process. When a table is converted through OCR, only the text survives. All information about cell edges, row boundaries, and column relationships is discarded. Vision AI retains that structural information, which is why it can correctly understand which value belongs to which row and column, even when layouts are complex or cells are merged.

2. Multi-page tables

Bank statements often span multiple pages. With 20 transactions on page 1 and 30 on page 2, OCR produces two separate tables with no continuity and lost running balances.

Vision AI output: Both pages are merged into a single 50-row table, preserving sequence and calculations.

3. Borderless tables

Financial statements often use whitespace instead of lines. Revenue figures, sub-categories like Product Sales and Service Revenue, and expense lines appear aligned visually but have no borders.

OCR output: Just text, no hierarchy, relationships lost.

Vision AI output: Two columns (Category and Amount) with parent-child hierarchy preserved, for example Revenue broken down into Product Sales and Service Revenue.

4. Complex headers

Consider a multi-row header where "Q1 2026" spans two sub-columns, Actual and Budget, beneath a Metric column.

OCR output: Misinterprets "Q1 2026" as a data cell, breaking alignment.

Vision AI output: Recognizes the hierarchical headers, correctly mapping Actual and Budget values under Q1 2026 and preserving semantic meaning.
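To make the idea of hierarchical headers concrete, here is a minimal sketch of how stacked header rows could be flattened into one label per column once the spans are known. The `flatten_headers` function and the `(text, colspan)` input format are assumptions made for this illustration, not the output format of any specific tool.

```python
def flatten_headers(header_rows):
    """Combine stacked header rows into one label per column.

    Each header row is a list of (text, colspan) pairs. A spanning cell is
    repeated across the columns it covers, then the levels are joined.
    """
    expanded = []
    for row in header_rows:
        labels = []
        for text, colspan in row:
            labels.extend([text] * colspan)
        expanded.append(labels)

    n_cols = len(expanded[0])
    if any(len(labels) != n_cols for labels in expanded):
        raise ValueError("header rows cover different numbers of columns")

    columns = []
    for col in range(n_cols):
        parts = []
        for level in expanded:
            label = level[col]
            if label and label not in parts:  # skip blanks and repeats
                parts.append(label)
        columns.append(" / ".join(parts))
    return columns


# The "Q1 2026" example: one spanning cell above Actual and Budget.
header_rows = [
    [("Metric", 1), ("Q1 2026", 2)],
    [("", 1), ("Actual", 1), ("Budget", 1)],
]
columns = flatten_headers(header_rows)
print(columns)
```

With labels like "Q1 2026 / Actual", every data value keeps its full meaning even after the table is exported to a flat spreadsheet.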

5. Mixed content types

Tables often contain checkboxes, symbols, and numbers in the same row. OCR misses checkmarks entirely and cannot distinguish empty cells from unchecked ones.

Vision AI output: Correctly identifies checkbox states, handles percentage values, and flags empty versus unchecked cells as distinct conditions.

Vision AI's 4-Step Process for Table Understanding

Step 1: Visual layout detection

Vision AI first sees the table as a grid of cells with relationships, not as a sequence of characters.

It detects cell boundaries (even without visible borders), row and column alignment, merged cells and spanning headers, table continuation across pages, and nested tables. Computer vision identifies rectangular regions, whitespace patterns indicate column divisions, and spatial relationships between text blocks are mapped. This ensures that even complex tables are correctly interpreted as structured grids rather than plain text.
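As a rough illustration of how whitespace patterns can indicate column divisions, the sketch below projects text blocks onto the horizontal axis and places a boundary in each wide gap. The function name, the `min_gap` threshold, and the coordinates are assumptions chosen for the example. Real Vision AI models learn these cues rather than applying a fixed rule.

```python
def column_boundaries(boxes, min_gap=10.0):
    """Return x positions that separate columns.

    boxes: (x_left, x_right) extents of text blocks taken from all rows.
    A boundary is placed in the middle of every horizontal gap that no
    text block crosses and that is at least min_gap wide.
    """
    if not boxes:
        return []
    intervals = sorted(boxes)
    merged = [list(intervals[0])]
    for left, right in intervals[1:]:
        if left <= merged[-1][1]:  # overlaps the current run of text
            merged[-1][1] = max(merged[-1][1], right)
        else:
            merged.append([left, right])

    boundaries = []
    for (_, prev_right), (next_left, _) in zip(merged, merged[1:]):
        if next_left - prev_right >= min_gap:
            boundaries.append((prev_right + next_left) / 2)
    return boundaries


# Two rows of a borderless three-column table (hypothetical coordinates).
boxes = [(20, 95), (200, 215), (300, 345),
         (20, 120), (203, 215), (305, 345)]
print(column_boundaries(boxes))
```

A fixed rule like this breaks as soon as a long description runs into the next column, which is one reason learned layout models tend to be more robust.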

Step 2: Structure recognition

Next, Vision AI determines the table type and its organizational logic. It identifies header rows versus data rows, summary rows (totals and subtotals), hierarchical parent-child relationships, and column data types (text, number, date, currency).

Having learned patterns from millions of documents, Vision AI recognizes that the same column may be labeled differently across vendors and still maps the data correctly. An invoice table almost always has Description, Quantity, Unit Price, and Total in some form, even when the layout changes.

Step 3: Content extraction

Vision AI extracts text cell by cell, preserving structure and relationships. Unlike OCR, which reads left-to-right and outputs unstructured text, Vision AI retains row and column coordinates, making the output immediately usable for downstream systems.

The result is structured JSON output where every cell has a row, column, value, and data type, ready to import without cleanup.
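A small sketch of what such cell-level output could look like follows. The field names (`row`, `column`, `value`, `type`) mirror the description above, but the exact schema and the simple `infer_type` heuristic are assumptions for illustration only.

```python
import json


def infer_type(value):
    """Very rough type guess; real systems use richer signals."""
    text = value.strip()
    if not text:
        return "empty"
    if text.startswith("$"):
        return "currency"
    try:
        float(text.replace(",", ""))
        return "number"
    except ValueError:
        return "text"


def table_to_cells(rows):
    """Turn a list of rows into one record per cell with coordinates."""
    return [
        {"row": r, "column": c, "value": value, "type": infer_type(value)}
        for r, row in enumerate(rows)
        for c, value in enumerate(row)
    ]


rows = [
    ["Item", "Quantity", "Price"],
    ["Widget A (Red)", "10", "$45.99"],
]
cells = table_to_cells(rows)
print(json.dumps(cells[4], indent=2))  # the Quantity cell of the first data row
```

Because each value carries its own coordinates, a downstream system can rebuild the grid or pick out a single column without guessing.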

Step 4: Validation and reasoning

This is where Vision AI separates itself most clearly from traditional OCR. An OCR system outputs characters and stops there. It has no awareness of whether the data it extracted makes sense. Vision AI, as an AI system, can reason about the data it sees and extracts, adding checks and context validation to confirm it has captured the right information.

In practice, this means Vision AI checks the data for logic and completeness after extraction. Validation examples include confirming that row totals equal Quantity times Unit Price, that running balances calculate correctly (Previous balance plus Credit minus Debit), that Quantity columns contain actual numbers, and that critical cells are not empty.
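The two arithmetic checks mentioned above can be sketched in a few lines. This is a minimal example under stated assumptions: the dictionary keys, the one-cent tolerance, and the sample figures are all hypothetical, and `Decimal` is used to avoid floating-point rounding on money.

```python
from decimal import Decimal

TOLERANCE = Decimal("0.01")


def check_line_items(rows):
    """Return indexes of rows where quantity * unit_price != total."""
    problems = []
    for i, row in enumerate(rows):
        expected = row["quantity"] * row["unit_price"]
        if abs(expected - row["total"]) > TOLERANCE:
            problems.append(i)
    return problems


def check_running_balance(opening, transactions):
    """Return indexes where balance != previous balance + credit - debit."""
    problems = []
    previous = opening
    for i, tx in enumerate(transactions):
        expected = previous + tx["credit"] - tx["debit"]
        if abs(expected - tx["balance"]) > TOLERANCE:
            problems.append(i)
        previous = tx["balance"]  # continue from the stated balance
    return problems


items = [
    {"quantity": Decimal("10"), "unit_price": Decimal("45.99"), "total": Decimal("459.90")},
    {"quantity": Decimal("5"), "unit_price": Decimal("12.00"), "total": Decimal("65.00")},
]
transactions = [
    {"credit": Decimal("0"), "debit": Decimal("200.00"), "balance": Decimal("800.00")},
    {"credit": Decimal("500.00"), "debit": Decimal("0"), "balance": Decimal("1250.00")},
    {"credit": Decimal("0"), "debit": Decimal("50.00"), "balance": Decimal("1200.00")},
]
print(check_line_items(items))
print(check_running_balance(Decimal("1000.00"), transactions))
```

Continuing from the stated balance rather than the expected one keeps a single misread figure from flagging every row after it.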

When inconsistencies are found, Vision AI flags low-confidence extractions, suggests corrections based on context, and alerts users to review. This ensures tables are not only read accurately but understood fully. Modern systems achieve 95 to 99% accuracy across document extraction and classification tasks, according to Analytics Insight.

4 Industries Where Vision AI Table Extraction Shines

Vision AI is not just a technical novelty. It delivers tangible results across multiple industries where complex tables dominate business documents.

Use Case 1: Invoice Processing (Accounting and Finance)

The challenge: Companies often receive 100 or more invoices monthly from various vendors, each with its own format. Invoices contain 5 to 50 line items, merged header cells, subtotals, taxes, and discounts. Traditional OCR leaves finance teams manually correcting errors.

What Vision AI extracts: item description, SKU or product code, quantity, unit price, line total, tax amount, and any discounts.

Validation checks: Does the sum of line totals equal the invoice total? Are taxes calculated correctly?
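At the invoice level, the same idea might look like the sketch below. It assumes a single flat tax rate applied to the subtotal and rounding to the nearest cent, which will not hold for every invoice. The function name and parameters are hypothetical.

```python
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")


def check_invoice(line_totals, tax_rate, stated_tax, stated_total):
    """Return a list of human-readable issues; an empty list means consistent."""
    issues = []
    subtotal = sum(line_totals, Decimal("0"))
    expected_tax = (subtotal * tax_rate).quantize(CENT, rounding=ROUND_HALF_UP)
    if expected_tax != stated_tax:
        issues.append(f"tax: expected {expected_tax}, found {stated_tax}")
    expected_total = subtotal + stated_tax
    if expected_total != stated_total:
        issues.append(f"total: expected {expected_total}, found {stated_total}")
    return issues


line_totals = [Decimal("459.90"), Decimal("60.00")]
print(check_invoice(line_totals, Decimal("0.08"), Decimal("41.59"), Decimal("561.49")))
print(check_invoice(line_totals, Decimal("0.08"), Decimal("41.59"), Decimal("561.94")))
```

The second call simulates a transposed digit in the invoice total, the kind of error that is easy to miss by eye.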

Real example: A mid-sized company processing 500 invoices per month with an average of 15 line items handles around 7,500 table rows monthly. Studies show automation can reduce processing time by over 80%, freeing teams to focus on higher-value work while minimizing errors.

Use Case 2: Bank Statement Processing (Accounting)

The challenge: Bank statements often have 50 to 200 transactions across multiple pages. Each row's running balance depends on the previous one, with debits and credits in separate columns. Dates, descriptions, and amounts vary across banks.

What Vision AI extracts: date, description, debit amount, credit amount, running balance, and category (derived from description keywords).
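Deriving a category from description keywords can be as simple as the sketch below. The categories and keyword lists are invented for the example, and a production system would likely use a larger, maintained mapping or a classifier.

```python
import re

# Hypothetical keyword map; adapt to your own chart of accounts.
CATEGORY_KEYWORDS = {
    "Payroll": {"payroll", "salary"},
    "Utilities": {"electric", "water", "internet"},
    "Bank Fees": {"fee", "fees"},
}


def categorize(description, default="Uncategorized"):
    """Return the first category whose keywords appear as whole words."""
    words = set(re.findall(r"[a-z]+", description.lower()))
    for category, keywords in CATEGORY_KEYWORDS.items():
        if words & keywords:
            return category
    return default


for text in ("ACME PAYROLL 03/15", "Monthly Service Fee", "Coffee Shop #12"):
    print(text, "->", categorize(text))
```

Matching whole words rather than substrings is deliberate: a plain substring test would file "Coffee Shop" under Bank Fees because "coffee" contains "fee".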

Real example: An accounting firm processing 100 client bank statements per month uses Vision AI to extract 15,000 or more transactions at 98% accuracy, saving 25 hours per month in manual reconciliation. Poor data quality costs organizations an average of $12.9 million per year, underlining the value of automated, accurate extraction.

Use Case 3: Scientific Paper Data Extraction (Research)

The challenge: Research tables are notoriously complex: nested headers, statistical data spanning multiple rows and columns, footnotes, rotated text, merged cells, and mixed units.

What Vision AI extracts: variable names, test results, statistical significance (p-values), sample sizes, measurement units, and footnote associations.

Real example: A pharmaceutical company extracting clinical trial data from 200 research papers achieves 95% table-data accuracy, cutting manual review time from 80 hours to 12 hours. More than 80% of healthcare data remains unstructured, making manual extraction time-consuming and difficult to scale.

Use Case 4: Financial Statement Analysis (Investment and Banking)

The challenge: Financial reports contain hierarchical tables with Revenue broken into Product Lines and Regions, borderless layouts, and scattered summary rows. Analysts need year-over-year comparisons with calculated margins and ratios.

What Vision AI extracts: line items (Revenue, COGS, Operating Expenses), values by time period, hierarchical relationships, calculated fields (margins, ratios), and YoY growth percentages.
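The calculated fields are ordinary ratios once the line items are extracted by period. The sketch below uses made-up figures and hypothetical function names; it returns `None` instead of dividing by zero.

```python
def gross_margin(revenue, cogs):
    """Gross margin as a fraction of revenue, or None if revenue is zero."""
    if revenue == 0:
        return None
    return (revenue - cogs) / revenue


def yoy_growth(current, previous):
    """Year-over-year growth as a fraction, or None if the prior value is zero."""
    if previous == 0:
        return None
    return (current - previous) / previous


# Hypothetical extracted values, in millions.
statement = {
    "Revenue": {"2024": 1000.0, "2025": 1200.0},
    "COGS": {"2024": 650.0, "2025": 720.0},
}
margin_2025 = gross_margin(statement["Revenue"]["2025"], statement["COGS"]["2025"])
revenue_growth = yoy_growth(statement["Revenue"]["2025"], statement["Revenue"]["2024"])
print(f"Gross margin 2025: {margin_2025:.1%}")
print(f"Revenue YoY growth: {revenue_growth:.1%}")
```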

Real example: An investment analyst who extracts data from 50 annual filings each quarter reduces extraction time from 3 hours per filing to 20 minutes. Data professionals spend 30 to 50% of their time searching, cleaning, and preparing data, significantly slowing analysis and reporting.

Troubleshooting Table Extraction Issues

Even the best Vision AI systems occasionally encounter tricky tables. Here is how to recognize and resolve common extraction challenges.

Challenge 1: Table not detected

Symptom: Vision AI treats the table as regular text.

Common causes: The table has no visible structure (pure whitespace alignment), the table is mixed with surrounding text, or the table is very small (fewer than 2 rows or 2 columns).

Solution: Adding minimal formatting, such as light gray borders or subtle shading, helps Vision AI detect cell boundaries. Isolating the table from body text reduces noise. Use explicit instructions like "Extract the table starting with [header text]".

Challenge 2: Columns misaligned

Symptom: Data from one column shifts into another (for example, column 3 data appears in column 2).

Common causes: Inconsistent spacing between columns, merged cells breaking alignment, or text wrapping within cells.

Solution: Enable strict column mode in Vision AI. Define the expected number of columns when possible. Review flagged misaligned cells and manually adjust if needed.
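If you know how many columns to expect, a quick post-extraction check can point reviewers to the rows most likely to be shifted. This is a minimal sketch that runs on already-extracted rows; the function name is hypothetical and it is independent of any product setting.

```python
def flag_misaligned_rows(rows, expected_columns):
    """Return (row_index, cell_count) for rows with the wrong number of cells."""
    return [
        (index, len(row))
        for index, row in enumerate(rows)
        if len(row) != expected_columns
    ]


rows = [
    ["Item", "Quantity", "Price"],
    ["Widget A", "10", "$45.99"],
    ["Widget B 5", "$12.00"],  # two cells were fused during extraction
]
print(flag_misaligned_rows(rows, expected_columns=3))
```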

Challenge 3: Multi-page tables break

Symptom: Page 2 is treated as a separate table.

Common causes: The header does not repeat on continuation pages, a page break occurs mid-row, or formatting changes on subsequent pages.

Solution: Modern Vision AI automatically detects table continuation. If issues persist, prompt the system with "This table continues across pages 3 to 5". Merge extracted tables programmatically for a single cohesive dataset.
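Merging per-page tables programmatically might look like the following sketch. It assumes each page arrives as a list of rows, that the first page starts with the header row, and that continuation pages may or may not repeat that header. The function name is hypothetical.

```python
def merge_pages(pages):
    """Concatenate per-page tables into one, keeping a single header row."""
    pages = [page for page in pages if page]  # ignore empty pages
    if not pages:
        return []
    header = pages[0][0]
    merged = [header]
    for page_number, page in enumerate(pages, start=1):
        for row_number, row in enumerate(page):
            if row_number == 0 and row == header:
                continue  # header (or repeated header) already recorded
            if len(row) != len(header):
                raise ValueError(
                    f"page {page_number}: row has {len(row)} cells, "
                    f"expected {len(header)}"
                )
            merged.append(row)
    return merged


header = ["Date", "Description", "Debit", "Credit", "Balance"]
page_1 = [header, ["03/01", "Rent", "1200.00", "", "3800.00"]]
page_2 = [["03/02", "Deposit", "", "500.00", "4300.00"]]          # no repeated header
page_3 = [header, ["03/03", "Utilities", "90.00", "", "4210.00"]]  # repeated header
table = merge_pages([page_1, page_2, page_3])
print(len(table))
```

Raising an error on a row with the wrong cell count is a design choice: it surfaces a page whose columns shifted instead of silently appending misaligned data.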

Challenge 4: Numbers extracted as text

Symptom: "$1,234.56" is stored as a string instead of a number.

Common causes: Currency symbols, commas, or percentage signs confuse parsing.

Solution: Vision AI's data type detection typically handles numeric parsing automatically. Configure output to strip symbols and parse as a float.

When testing, use your worst documents, including faxed copies, low-quality scans, phone photos at odd angles, and documents with smudges. If Vision AI handles these, it can handle virtually any table.
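For the numeric parsing issue in Challenge 4, a fallback cleanup step can be sketched as below if your pipeline still hands you strings. It assumes US-style formatting (comma as thousands separator, period as decimal point) and treats parentheses as an accounting-style negative. The function name is hypothetical.

```python
import re

_NUMBER = re.compile(r"-?\d+(\.\d+)?")


def parse_number(text):
    """Convert strings like "$1,234.56" to float; return None if not numeric."""
    cleaned = re.sub(r"[$,%\s]", "", text)
    if cleaned.startswith("(") and cleaned.endswith(")"):
        cleaned = "-" + cleaned[1:-1]  # (45.00) means -45.00
    if not _NUMBER.fullmatch(cleaned):
        return None
    return float(cleaned)  # percentages keep their face value: "12.5%" -> 12.5


for raw in ("$1,234.56", "(45.00)", "12.5%", "N/A"):
    print(repr(raw), "->", parse_number(raw))
```

For amounts that feed accounting systems, swapping `float` for `decimal.Decimal` avoids binary rounding surprises.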

Why Table Extraction Finally Works

If there is one place where document processing breaks, it is tables. Not because they are rare, but because they are everywhere and messy by default. Merged cells, multi-page layouts, and missing borders are exactly where traditional OCR falls apart. With failure rates often reaching 25 to 40% on complex tables, most teams spend more time fixing data than using it.

Vision AI changes this by approaching tables differently. Instead of reading characters line by line, it understands structure: rows, columns, relationships, and even calculations. The result is 95 to 98% accuracy even on documents that typically break OCR.

That shift has a direct impact. Processing becomes 6 to 10 times faster than manual entry. Costs drop significantly by reducing correction work. And there is no need to build or maintain templates when formats change.

More importantly, it works on the kinds of tables that actually matter: invoice line items, bank transactions, financial reports, and complex scientific data.

Parseur applies Vision AI directly to real workflows, extracting structured data from documents without rigid templates. Upload a document with a complex table, see the data extracted in seconds, and send it directly to tools like Google Sheets, QuickBooks, or Airtable.
