Top SaaS investor Tomasz Tunguz of Theory Ventures has validated a hard truth: email parsing is a frontier AI problem, not a simple automation task. When combined with voice transcription and messy data extraction, it requires state-of-the-art systems to operate reliably in production, especially at scale.

Key Takeaways:

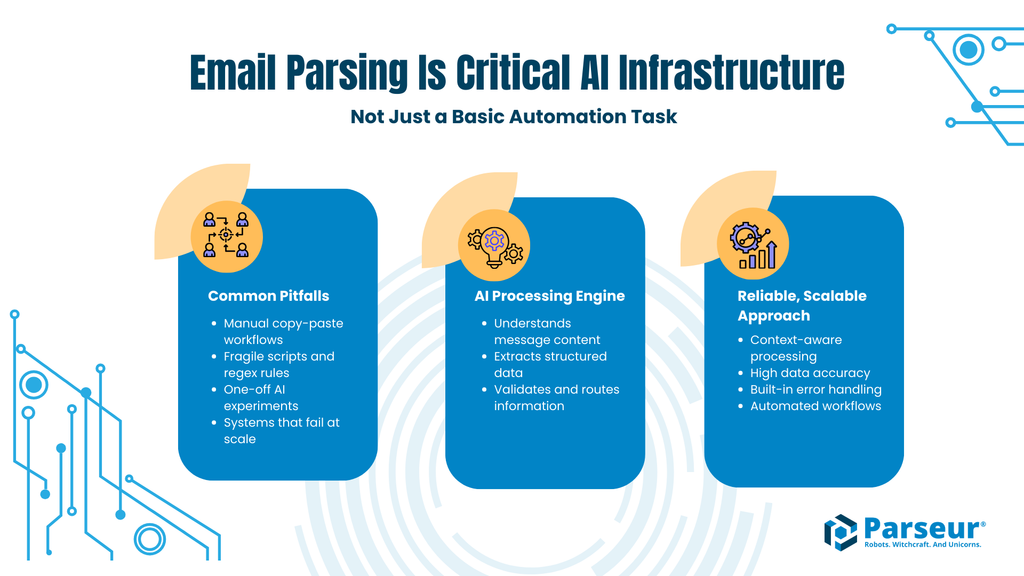

- Email parsing is inherently hard. Real inboxes are unpredictable, ambiguous, and full of edge cases that break basic automation.

- Generic AI is not enough. One-off GPT prompts or brittle rules fail on consistency, cost, and reliability in production.

- Hybrid systems win. Purpose-built platforms like Parseur combine templates with adaptive AI to handle both the predictable and the chaotic.

Why One of SaaS's Most Influential Investors Says Email Parsing Is Harder Than You Think

A top VC just validated what many AI practitioners have known for years: AI email parsing is one of the hardest problems in applied artificial intelligence.

Tomasz Tunguz of Theory Ventures, one of SaaS's most influential investors, known for backing companies like Looker and major infrastructure platforms, recently published "9 Observations from Building with AI Agents." In it, he places email parsing alongside voice transcription and messy data extraction as tasks that require "state-of-the-art" AI systems.

That framing matters.

When investors who fund frontier AI infrastructure publicly identify a problem as genuinely difficult, it signals more than a passing trend. It signals technical depth. It signals production complexity. It signals durability.

Many teams assume email parsing is simple automation that a few scripts or regexes can handle.

That assumption breaks in production. Modern AI email parsing operates at a fundamentally different level: unlike OCR, which reconstructs text from images, it works on text that is already present and has to understand what that text means.

Tunguz's observations reveal why intelligent email processing belongs in the category of serious AI agent use cases, and why solving it reliably requires more than basic automation.

When the input is unpredictable, email parsing, voice transcription, and messy data extraction reach for state-of-the-art.

Tomasz Tunguz, Theory Ventures

What Tunguz Actually Said (And Why It Matters)

The Key Observations from Tunguz's Article

Email parsing is not mentioned casually in Tunguz's piece. It is grouped with voice transcription and other chaotic data ingestion tasks, problems known for variability, ambiguity, and production brittleness. Rather than simply converting images into text, modern AI systems aim to understand what a document is about, how its elements relate to one another, and why certain data points matter in context.

That distinction validates what many teams discover the hard way: AI email parsing breaks when treated like simple automation.

Tunguz's second observation reinforces this point. He notes that fine-tuned small models often outperform GPT-4-style zero-shot prompting for well-defined tasks. Purpose-built systems win over generic AI.

The implication is clear: throwing a large general model at email parsing is not enough. Specialized approaches combining structure, training, and contextual reasoning are more reliable. This philosophy mirrors hybrid architectures that blend templates with AI reasoning rather than relying on a single method.

Finally, there is the production reality test. Venture investors see hundreds of polished AI demos that perform flawlessly in controlled environments. Highlighting email parsing signals something different: this is where systems fail at scale. The real test is not whether a demo works. It is whether it survives the chaos of real inboxes.

Why a VC's Perspective Matters

Tunguz was an early investor in Looker (acquired by Google for $2.6B) and has deep experience evaluating SaaS infrastructure companies. Theory Ventures focuses specifically on data, AI, and infrastructure software, not surface-level automation.

VCs filter through thousands of AI pitches. When someone with that exposure calls a category genuinely hard, it is a signal. For buyers and operators, that signal matters. If sophisticated investors recognize the complexity of AI email parsing, so should procurement teams.

When a VC who has seen every AI pitch says email parsing needs 'state of the art,' that is not hype. It is a warning about underestimating the problem.

Why Email Parsing Is Actually Hard

The Unpredictability Problem

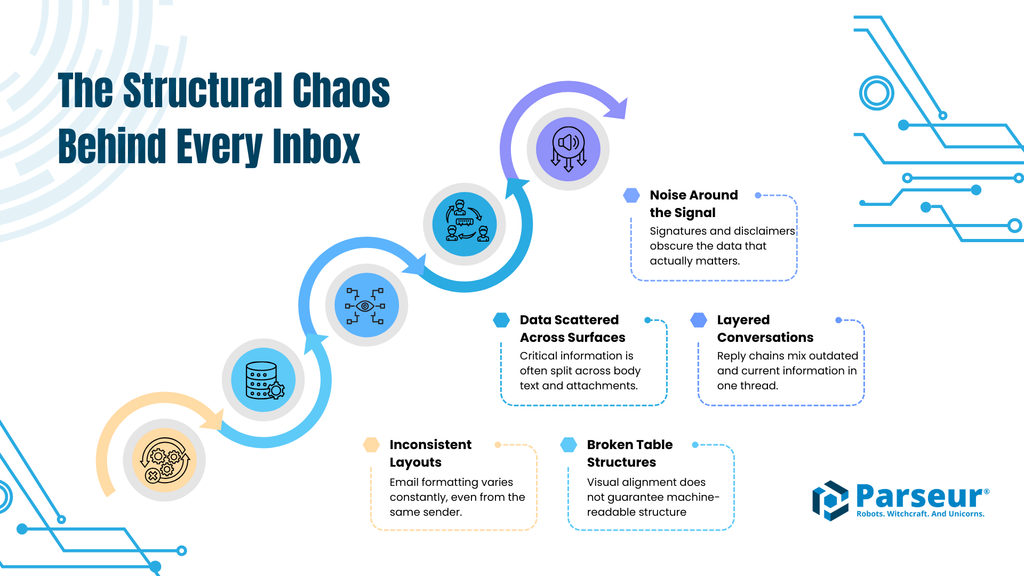

Email is not structured data. It is structured sometimes, semi-structured often, and chaotic more frequently than teams expect. It is communication first, data container second.

At a surface level, extracting fields from an email appears straightforward. In production inboxes, it rarely is.

Format anarchy is the baseline. Emails arrive as plain text, HTML, rich text, or hybrid layouts. Tables are often not true tables, using ASCII formatting or inconsistent spacing instead. Critical data can be embedded inline or buried in attachments. Mobile signatures, legal disclaimers, and thread history all create noise. Forwarded conversations stack multiple contexts in a single message.
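To make the format problem concrete, here is a minimal sketch of just the first step: getting readable text out of a message that may be plain text, HTML, or both. It uses only Python's standard `email` and `html.parser` modules. The function name `readable_body` is an assumption for illustration, and the sketch deliberately ignores attachments, signatures, and thread history.

```python
from email import message_from_string
from email.policy import default
from html.parser import HTMLParser


class _TextOnly(HTMLParser):
    """Collects text nodes and ignores the tags around them."""

    def __init__(self):
        super().__init__()
        self.chunks = []

    def handle_data(self, data):
        if data.strip():
            self.chunks.append(data.strip())


def readable_body(raw: str) -> str:
    """Return the plain-text body if present, else text stripped from HTML."""
    msg = message_from_string(raw, policy=default)
    part = msg.get_body(preferencelist=("plain", "html"))
    if part is None:
        return ""
    content = part.get_content()
    if part.get_content_type() == "text/html":
        parser = _TextOnly()
        parser.feed(content)
        return " ".join(parser.chunks)
    return content.strip()
```

Even this small step needs a format decision before any field is extracted, and it says nothing yet about which of the recovered lines actually matter.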

Even a single vendor can send five materially different invoice email formats over two years. A minor template redesign, a new footer, a different accounting export: each change introduces failure points for brittle extraction systems.

Then comes semantic ambiguity. "Total: $5,000." Is that the subtotal? Total before tax? Total including fees? "Due in 30 days" versus "Net 30" versus "Payment terms: 30 days from invoice date." Same intent, different phrasing, and different date calculations depending on context.

Multiple dates frequently coexist: invoice date, service period, due date, and email sent date. Humans resolve these ambiguities instantly by applying contextual reasoning. AI systems must infer meaning from structure, placement, and linguistic cues.
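The payment-terms example can be sketched in a few lines. This is a minimal illustration, assuming three hand-written patterns for the three phrasings quoted above; the pattern list and the function names are hypothetical, not taken from any product.

```python
import re
from datetime import date, timedelta
from typing import Optional

# Hypothetical patterns covering only the three phrasings quoted above.
_TERMS_PATTERNS = [
    re.compile(r"\bnet\s+(\d{1,3})\b", re.IGNORECASE),
    re.compile(r"\bdue\s+in\s+(\d{1,3})\s+days\b", re.IGNORECASE),
    re.compile(r"\bpayment\s+terms:\s*(\d{1,3})\s+days\b", re.IGNORECASE),
]


def payment_term_days(text: str) -> Optional[int]:
    """Return the number of days in the payment terms, or None if not found."""
    for pattern in _TERMS_PATTERNS:
        match = pattern.search(text)
        if match:
            return int(match.group(1))
    return None


def due_date(text: str, anchor: date) -> Optional[date]:
    """Add the term length to an anchor date chosen by the caller."""
    days = payment_term_days(text)
    if days is None:
        return None
    return anchor + timedelta(days=days)
```

Normalizing the phrasing is the easy part. The sketch leaves the hard part to the caller: deciding whether the anchor is the invoice date, the service period end, or the date the email was sent.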

And then there is the long tail: forwarded emails with nested historical data, reply chains where only one section reflects the current invoice, corrections like "Updated invoice below, disregard the previous one." These are not rare anomalies. They are routine operational noise. That long tail is where systems either survive or fail.

Why Generic AI Approaches Fail

When teams recognize complexity, many default to large language models. GPT-style general models are powerful, but they are not deterministic systems. Common failure modes include:

- Inconsistent extraction: the same email yields slightly different outputs.

- Hallucination risk: invented invoice numbers, dates, or totals.

- No embedded memory of your historical vendor patterns.

- Usage-based pricing that compounds at scale: $0.01-$0.05 per email becomes material across thousands of messages.

Probabilistic outputs are acceptable in creative tasks. In accounting or operations workflows, variability becomes risk.

On the opposite end, rule-based extraction appears safe, until it is not. It breaks when formatting shifts, cannot generalize across layout variations, requires constant maintenance, and is fundamentally brittle under ambiguity. Rules are precise, but precision without adaptability fails in environments defined by change. Email parsing breaks at both extremes: too generic or too rigid.
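A small sketch shows how quietly a rule breaks. The regex, the invoice numbers, and the two sample bodies below are invented for illustration; the point is only that a rule written against one layout returns nothing after a minor redesign.

```python
import re
from typing import Optional

# A hypothetical rule written against one vendor's original layout.
TOTAL_RULE = re.compile(r"^Total: \$([\d,]+\.\d{2})$", re.MULTILINE)


def extract_total(body: str) -> Optional[str]:
    """Return the captured amount, or None when the rule does not match."""
    match = TOTAL_RULE.search(body)
    return match.group(1) if match else None


# The layout the rule was written for.
original = "Invoice 1001\nTotal: $5,000.00\nThank you"

# The same vendor after a small wording change.
redesigned = "Invoice 1002\nTotal due (USD): 5,000.00\nThank you"

print(extract_total(original))    # matches the original layout
print(extract_total(redesigned))  # no match after the redesign
```

The second call does not raise an error. It returns `None`, so unless something downstream checks for missing fields, the failure goes unnoticed.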

What "State of the Art" Actually Means

When Tomasz Tunguz suggests reaching for "state of the art," it does not mean simply upgrading to the newest large model. It means systems designed specifically for document and email variability.

In practice, that includes models trained on document and email structures (not just conversational text), context-aware extraction that understands relationships between fields, adaptive learning that improves with your organization's patterns, production-hardened exception handling, and consistent, verifiable outputs with validation layers.
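As one illustration of a validation layer, the sketch below checks extracted invoice fields against each other before they reach a downstream system. The `ExtractedInvoice` fields and the three rules are assumptions chosen for the example; a real deployment would have its own schema and checks.

```python
from dataclasses import dataclass
from datetime import date
from decimal import Decimal
from typing import List


@dataclass
class ExtractedInvoice:
    """Hypothetical shape of one extraction result."""
    invoice_number: str
    invoice_date: date
    due_date: date
    subtotal: Decimal
    tax: Decimal
    total: Decimal


def validate(inv: ExtractedInvoice) -> List[str]:
    """Return a list of problems; an empty list means the record passed."""
    problems = []
    if not inv.invoice_number.strip():
        problems.append("missing invoice number")
    if inv.due_date < inv.invoice_date:
        problems.append("due date precedes invoice date")
    if inv.subtotal + inv.tax != inv.total:
        problems.append("subtotal + tax does not equal total")
    return problems
```

Records that come back with a non-empty list can be routed to human review instead of being written to the ledger, which is what makes the output verifiable regardless of how the fields were extracted.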

State-of-the-art AI parsing requires purpose-built email parsing features designed for variability, validation, and scale. This is the difference between a demo and infrastructure.

Email Parsing Approaches Comparison

| Capability | Generic LLM (GPT-4) | Rule-Based Scripts | State-of-the-Art AI (Parseur-style) |
|---|---|---|---|
| Format handling | Inconsistent | Rigid templates | Adaptive |
| Edge case handling | Unpredictable | Fails completely | Learns and adapts |
| Cost at scale | High ($0.01-$0.05/email) | Low | Comparable per-parse cost, but includes full workflow: ingestion, processing, data delivery, logs, and human review |
| Accuracy | 80-90% | 60-75% | 95-99%+ |
| Maintenance | Ongoing prompt tuning | Constant fixes | Self-improving |
| Production ready | No | No | Yes |

"State of the art" does not mean "newest GPT model." It means purpose-built AI systems engineered to survive production variability. That distinction is what separates automation experiments from operational infrastructure.

The Hybrid Approach: Why Specialized Beats General

Tunguz's Second Key Insight

In his broader commentary on AI agents, Tomasz Tunguz makes a second, often-overlooked observation: fine-tuned small models can outperform GPT-4-style systems on well-defined tasks. That insight has major implications. It suggests task-specific training beats general capability, smaller focused models outperform large generalized models, and domain expertise beats broad surface-level knowledge.

Large language models are designed to handle a wide range of tasks reasonably well. But "reasonably well" is not the standard for production finance or operations workflows.

Email parsing is not an open-ended reasoning task. It is a constrained, repeatable problem: extract structured business data from semi-structured communication. Models trained specifically on invoices, purchase orders, shipping confirmations, and transactional emails consistently outperform general chatbots attempting zero-shot extraction. For applied AI, specialization wins.

The Parseur Philosophy (Validated)

Since 2016, Parseur has taken a hybrid approach that reflects this philosophy. Rather than choosing between rigid templates or unconstrained AI, the system combines both: templates when the structure is consistent, and AI reasoning when variability appears.

This design aligns with real-world email patterns. Most vendors are consistent until they are not. Templates efficiently handle the predictable 80%: recurring invoice layouts, standardized order confirmations, and repeatable formatting. They provide speed and determinism. AI handles the remaining 20%: format changes, branding updates, new vendors, forwarded threads, corrections, and edge cases.

Consider a typical scenario. Vendor A sends invoices in the same layout for months, so template extraction excels. Vendor A then updates branding, the layout shifts, and AI adapts without breaking the workflow. A new Vendor B appears, AI extracts immediately, and an optional template can be created later. A forwarded invoice includes corrections, and contextual reasoning resolves the current data. The result is production-grade reliability: structured enough to trust, flexible enough to adapt.
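The routing logic in that scenario can be sketched as "template first, AI as fallback." This is an illustration of the general pattern only, not Parseur's implementation: the `TEMPLATES` table, the sender address, and the `ai_extract` callable are all hypothetical stand-ins.

```python
import re
from typing import Callable, Dict, Optional

Fields = Dict[str, str]

# Hypothetical per-sender templates: deterministic patterns for known layouts.
TEMPLATES: Dict[str, Dict[str, re.Pattern]] = {
    "billing@vendor-a.example": {
        "invoice_number": re.compile(r"Invoice #(\d+)"),
        "total": re.compile(r"Total: \$([\d,]+\.\d{2})"),
    },
}


def apply_template(sender: str, body: str) -> Optional[Fields]:
    """Return all template fields, or None if any pattern fails to match."""
    template = TEMPLATES.get(sender)
    if template is None:
        return None
    fields = {}
    for name, pattern in template.items():
        match = pattern.search(body)
        if match is None:
            return None  # layout drifted: treat the template as not applicable
        fields[name] = match.group(1)
    return fields


def parse_email(sender: str, body: str, ai_extract: Callable[[str], Fields]) -> Fields:
    """Use the template when it fully matches; otherwise fall back to the model."""
    fields = apply_template(sender, body)
    if fields is not None:
        return {**fields, "method": "template"}
    return {**ai_extract(body), "method": "ai"}
```

Recording which method produced each result is a deliberate choice in this sketch: it lets a team see when a known vendor starts falling through to the fallback, which is usually the first sign that a layout has changed.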

Why General AI Is Not Enough

The chatbot shortcut sounds appealing: "Just use GPT-4 to extract invoice data." In practice, that approach is often more expensive at scale, less consistent across runs, slower in high-volume workflows, and exposed to hallucination risk.

The real question is operational: can you stake your accounts payable process on it? General-purpose AI struggles to pass that test. Purpose-built document extraction systems are trained on large volumes of real business emails, optimized for speed and cost efficiency, and designed to produce verifiable, auditable outputs. That is the difference between experimentation and infrastructure.

But extraction accuracy is only part of the equation. At scale, businesses also need the surrounding infrastructure: a reliable way to ingest documents from multiple sources, monitor processing in real time, flag exceptions for human review, reprocess individual documents when something goes wrong, and audit every step after the fact. A raw AI API call gives you none of that. Purpose-built platforms like Parseur provide the full pipeline out of the box, so teams spend their time on decisions, not on debugging pipelines.

What This Means for Businesses

Stop Underestimating Email Parsing

When Tomasz Tunguz categorizes email parsing as a "state-of-the-art" AI problem, the takeaway is not theoretical. It is operational.

If frontier AI investors consider it hard, businesses should treat it accordingly. That means:

- Do not assign it to a junior developer as a weekend automation project.

- Do not assume a few regex rules and scripts will scale.

- Do not expect a simple ChatGPT API call to become production infrastructure.

Email parsing touches revenue, accounting, logistics, compliance, and customer workflows. When it breaks, it does not fail quietly. It creates downstream errors.

The smarter approach is to recognize it for what it is: a genuine AI infrastructure problem that requires reliability, adaptability, and guardrails.

Evaluate Solutions Properly

Tunguz's emphasis on unpredictability offers a practical evaluation framework. When assessing vendors, the questions matter as much as the demo.

"How do you handle unpredictable inputs?" Good answer: Adaptive AI with fallback strategies and validation layers. Weak answer: "Our templates cover most cases."

"Are you using general AI or specialized models?" Good answer: Purpose-built, domain-trained systems. Weak answer: "We just call the OpenAI API."

"Show me production accuracy on real email chaos." Good answer: 95-99%+ with documented edge-case handling. Weak answer: "97% accuracy in our internal tests."

"What happens when a vendor changes their format?" Good answer: Automatic adaptation without workflow downtime. Weak answer: "You can update the template."

The goal is not impressive demos. It is resilience under variability.

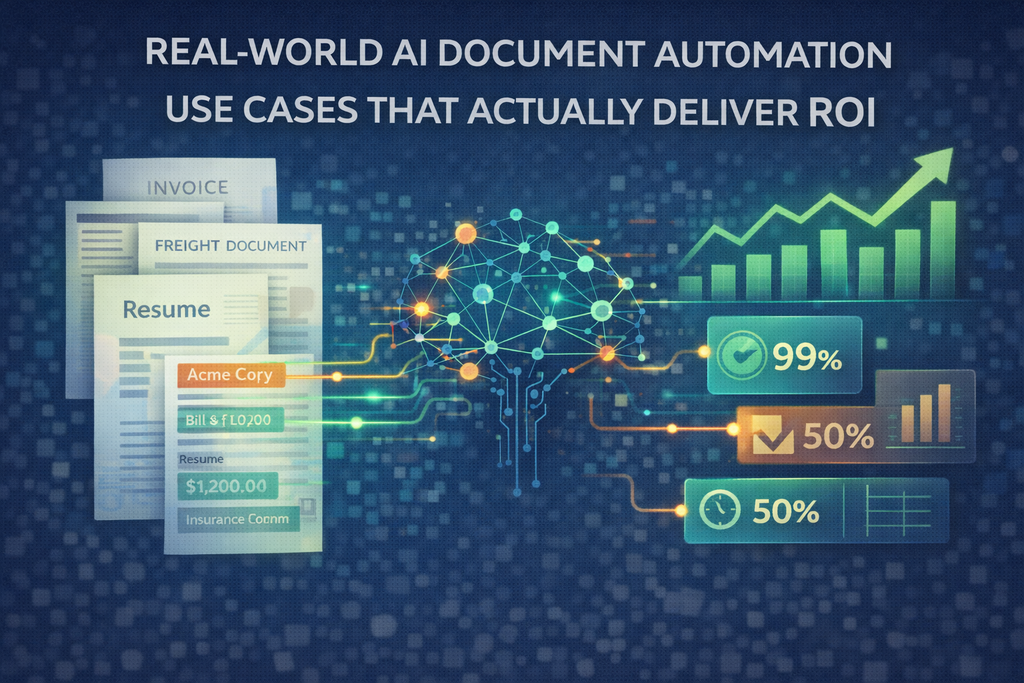

The ROI of Getting It Right

According to a Parseur-commissioned survey of 500 U.S. professionals, organizations are simultaneously confident in their data and regularly finding errors in it, with 88% of respondents reporting errors in document-derived data at least sometimes.

Those errors become exception queues. Exception queues require manual review. Manual review erodes automation ROI.

Consider a simplified cost comparison:

- DIY scripts: "Free," but 40 hours per month in maintenance.

- Generic AI API: $500 per month in usage, 10-15% exception rate.

- Purpose-built system: $200-$400 per month, under 2% exception rate, minimal maintenance.

When time, reliability, and downstream impact are included, specialized systems often deliver multiples of ROI. True automation is not "set and babysit." It is "set and trust."
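The comparison above can be turned into rough monthly figures once labor is priced in. The fees, maintenance hours, and exception rates below come from the list; the hourly rate, email volume, and review time per exception are assumptions made up for this sketch, and the DIY option is given no exception rate because the list does not state one.

```python
from dataclasses import dataclass

# Assumptions for illustration only, not figures from the survey or any vendor.
HOURLY_RATE = 50.0            # cost of one staff hour, in dollars
EMAILS_PER_MONTH = 5_000
MINUTES_PER_EXCEPTION = 3.0   # manual review time per flagged email


@dataclass
class Option:
    name: str
    monthly_fee: float
    maintenance_hours: float
    exception_rate: float

    def monthly_cost(self) -> float:
        """Fee plus the labor cost of maintenance and exception review."""
        review_hours = EMAILS_PER_MONTH * self.exception_rate * MINUTES_PER_EXCEPTION / 60
        return self.monthly_fee + (self.maintenance_hours + review_hours) * HOURLY_RATE


options = [
    Option("DIY scripts", 0.0, 40.0, 0.0),              # no exception rate given
    Option("Generic AI API", 500.0, 0.0, 0.10),         # low end of 10-15%
    Option("Purpose-built system", 400.0, 0.0, 0.02),   # high end of both ranges
]

for option in options:
    print(f"{option.name}: ${option.monthly_cost():,.0f} per month")
```

Under these assumptions the three options come to roughly $2,000, $1,750, and $650 per month. The exact figures move with the assumed rate and volume, so the useful part is the structure: the subscription fee is only one term, and labor is the other.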

Listen to the People Who Fund the Future

When Tomasz Tunguz of Theory Ventures calls email parsing a frontier AI agent use case, that framing carries weight. He places it alongside voice transcription and messy data extraction, categories known for unpredictability, ambiguity, and production brittleness. His guidance is clear: reach for state-of-the-art systems. And his broader insight reinforces that fine-tuned, specialized models outperform large general LLMs for well-defined operational tasks.

That perspective aligns closely with what Parseur has been building since 2016: hybrid architectures that combine templates with adaptive AI, designed not for demos, but for production reliability.

Email parsing is not simple automation. It is a production AI challenge. For businesses, the takeaway is straightforward:

- Stop treating email parsing as trivial.

- Invest in purpose-built systems.

- Demand production-grade accuracy, adaptability, and consistency.

Accounts payable, procurement, logistics, and operations workflows depend on structured, reliable data. When the investors funding the AI future say email parsing is hard, it may be time to stop treating it like it is easy.
